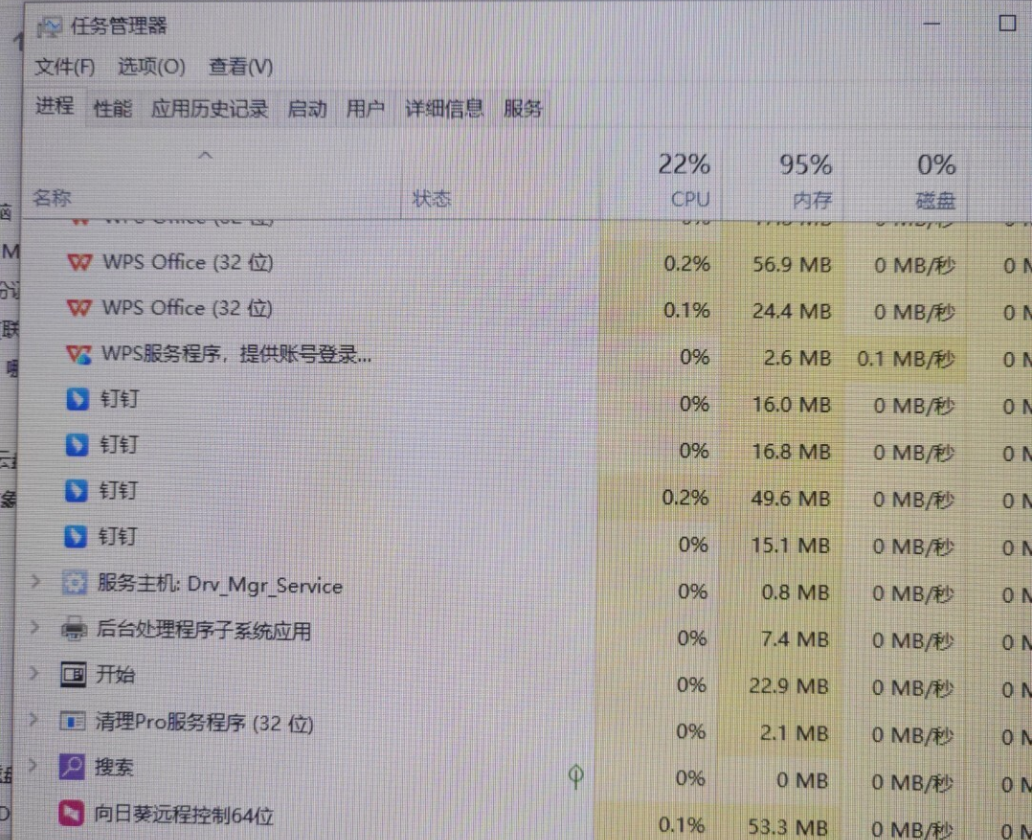

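Deploying a Prometheus + Grafana Monitoring System on Kubernetes

Applies to: Kubernetes 1.16+ (the Ingress manifests in this guide use networking.k8s.io/v1, which requires 1.19+)

1. Document Overview

1.1 Purpose

This document describes a standard operating procedure for deploying the Prometheus monitoring system, the Grafana visualization platform, and the Alertmanager alerting component in a Kubernetes cluster.

1.2 Component Architecture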

┌─────────────────────────────────────────────────────────────┐
│                     Kubernetes Cluster                      │
├──────────────┬───────────────┬──────────────┬───────────────┤
│  Prometheus  │    Grafana    │ Alertmanager │ Node Exporter │
│ (collection) │ (dashboards)  │  (alerting)  │(node metrics) │
└──────────────┴───────────────┴──────────────┴───────────────┘
                               │
┌─────────────────────────────────────────────────────────────┐
│         Data flow: Metrics → Alert → Visualization          │
└─────────────────────────────────────────────────────────────┘

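2. Prerequisite Checklist

2.1 Environment Checks

# Check the Kubernetes server version
# (the --short flag was removed in recent kubectl releases; plain `kubectl version` works everywhere)
kubectl version | grep -i server

# Check the kubectl configuration
kubectl cluster-info

# Check node resources
kubectl get nodes -o wide
kubectl describe nodes | grep -A 5 -B 5 "Allocatable"

# Check the available storage classes
kubectl get storageclass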

2.2 Resource Requirements

| Component | CPU | Memory | Storage | Replicas |
|---|---|---|---|---|
| Prometheus | 2 cores | 4Gi | 50Gi | 1 |
| Grafana | 1 core | 1Gi | 10Gi | 1 |
| Alertmanager | 0.5 core | 256Mi | 5Gi | 3 |
| Node Exporter | 0.1 core | 100Mi | – | DaemonSet (one per node) |

2.3 Tool Installation Checks

# 1. Check that Helm is installed
helm version
# Expected output: version.BuildInfo{Version:"v3.x.x"}

# 2. If Helm is not installed, install it
curl -fsSL -o get_helm.sh https://raw.githubusercontent.com/helm/helm/main/scripts/get-helm-3
chmod 700 get_helm.sh
./get_helm.sh

# 3. Verify network connectivity (to GitHub and Docker Hub)
curl -I https://github.com
curl -I https://hub.docker.com

3. Detailed Deployment Steps

3.1 Prepare the Environment

# Step 1.1: create the monitoring namespace
kubectl create namespace monitoring
kubectl label namespace monitoring name=monitoring

# Step 1.2: add the Helm repositories
helm repo add prometheus-community https://prometheus-community.github.io/helm-charts
helm repo add grafana https://grafana.github.io/helm-charts
helm repo update

# Verify that the repositories were added
helm search repo prometheus-community
helm search repo grafana

3.1.1 Quick Install with Helm

################################################### kube-prometheus-stack ########################################

# Create the namespace first (skip if already done above)
kubectl create namespace monitoring

# Install into that namespace
helm install prometheus prometheus-community/kube-prometheus-stack --namespace monitoring

# List the services
kubectl get svc -n monitoring

# Option 1: NodePort (test environments)
## Change the Grafana service type
kubectl patch svc prometheus-grafana -n monitoring -p '{"spec": {"type": "NodePort"}}'
## Look up the assigned port
kubectl get svc prometheus-grafana -n monitoring
## Change the Prometheus service type
kubectl patch svc prometheus-kube-prometheus-prometheus -n monitoring -p '{"spec": {"type": "NodePort"}}'
## Look up the assigned port
kubectl get svc prometheus-kube-prometheus-prometheus -n monitoring

# Option 2: temporary access through port-forward (test environments)
## Prometheus UI: run the command, then open http://localhost:9090 in a browser
kubectl port-forward svc/prometheus-kube-prometheus-prometheus -n monitoring 9090:9090
## Grafana UI: run the command, then open http://localhost:3000 in a browser
kubectl port-forward svc/prometheus-grafana -n monitoring 3000:80
## Alertmanager UI: run the command, then open http://localhost:9093 in a browser
kubectl port-forward svc/prometheus-kube-prometheus-alertmanager -n monitoring 9093:9093

# Option 3: Ingress (production)
## Create grafana-ingress.yaml
## (no rewrite-target annotation is needed because the path is already /)
cat > grafana-ingress.yaml << EOF
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: grafana-ingress
  namespace: monitoring
spec:
  ingressClassName: nginx
  rules:
    - host: grafana.example.com
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: prometheus-grafana
                port:
                  number: 80
EOF

## Deploy the Ingress
kubectl apply -f grafana-ingress.yaml

# Notes
## The default Grafana user is admin; retrieve the password with:
kubectl --namespace monitoring get secrets prometheus-grafana -o jsonpath="{.data.admin-password}" | base64 -d ; echo

## Custom configuration
## A plain `helm install` uses the chart defaults. To customize settings such as persistent storage
## or scrape rules, dump the defaults to a file and edit it to match your needs:
helm show values prometheus-community/kube-prometheus-stack > custom-values.yaml

## Then install with the edited file (in place of the default install above)
helm install prometheus prometheus-community/kube-prometheus-stack -f custom-values.yaml --namespace monitoring
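The `base64 -d` step in the password command is there because Kubernetes stores values under a Secret's `.data` field base64-encoded (encoded, not encrypted). The following is a minimal local sketch of that round trip. It uses the sample password from this guide as an illustrative value and needs no cluster access.

```shell
#!/usr/bin/env bash
# Encode a value the way it appears under .data in a Secret.
encoded=$(printf '%s' 'ChangeMe123!' | base64)

# Decode it again, as the "kubectl get secrets ... | base64 -d" pipeline does.
decoded=$(printf '%s' "$encoded" | base64 -d)

echo "encoded: $encoded"
echo "decoded: $decoded"
```

Because decoding is this easy, anyone who can read the Secret can read the password, so access to Secrets in the monitoring namespace should be restricted through RBAC.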

3.2.1 Deployment with Custom Configuration

# Create a working directory
mkdir -p ~/prometheus-deploy && cd ~/prometheus-deploy

# Step 2.1: create the main values file
cat > prometheus-stack-values.yaml << 'EOF'
# kube-prometheus-stack values.yaml
# Chart version: 45.0.0
global:
  imagePullSecrets: []
  imagePullPolicy: IfNotPresent

# Grafana
grafana:
  enabled: true
  adminPassword: "ChangeMe123!"  # must be changed after the first login
  persistence:
    enabled: true
    storageClassName: "standard"
    accessModes: ["ReadWriteOnce"]
    size: 10Gi
  ingress:
    enabled: false  # enabling this is recommended in production
    # If enabled, configure the following:
    # hosts:
    #   - grafana.yourdomain.com
    # annotations:
    #   kubernetes.io/ingress.class: nginx
    #   cert-manager.io/cluster-issuer: letsencrypt-prod
  plugins:
    - grafana-piechart-panel
  # Important: keep anonymous access disabled.
  # The admin password is taken from adminPassword above, so it is not repeated here.
  grafana.ini:
    security:
      disable_initial_admin_creation: false
    auth:
      disable_login_form: false
      disable_signout_menu: false
    auth.anonymous:
      enabled: false

# Prometheus
prometheus:
  prometheusSpec:
    retention: 15d  # 30d is recommended in production
    # Kept below the 50Gi volume size to leave headroom for the WAL
    retentionSize: "45GiB"
    storageSpec:
      volumeClaimTemplate:
        spec:
          storageClassName: "standard"
          accessModes: ["ReadWriteOnce"]
          resources:
            requests:
              storage: 50Gi
    # Pick up all PodMonitor and ServiceMonitor objects, not only those created by this release
    podMonitorSelectorNilUsesHelmValues: false
    serviceMonitorSelectorNilUsesHelmValues: false
    # Resource limits
    resources:
      requests:
        memory: 2Gi
        cpu: 1000m
      limits:
        memory: 4Gi
        cpu: 2000m
    # Discover all PrometheusRule objects automatically
    ruleSelectorNilUsesHelmValues: false
    # Remote write (optional)
    # remoteWrite:
    #   - url: "http://thanos-receive:19291/api/v1/receive"

# Alertmanager
alertmanager:
  enabled: true
  config:
    global:
      resolve_timeout: 5m
      smtp_smarthost: 'smtp.gmail.com:587'
      smtp_from: 'alerts@yourcompany.com'
      smtp_auth_username: 'user@gmail.com'
      smtp_auth_password: 'password'
      smtp_require_tls: true
    route:
      group_by: ['alertname', 'cluster']
      group_wait: 30s
      group_interval: 5m
      repeat_interval: 12h
      receiver: 'default-receiver'
      routes:
        - match:
            severity: critical
          receiver: 'critical-receiver'
          group_interval: 1m
          repeat_interval: 5m
    receivers:
      - name: 'default-receiver'
        email_configs:
          - to: 'devops@yourcompany.com'
            send_resolved: true
      - name: 'critical-receiver'
        email_configs:
          - to: 'oncall@yourcompany.com'
            send_resolved: true
        pagerduty_configs:
          - service_key: '<pagerduty-service-key>'
  alertmanagerSpec:
    storage:
      volumeClaimTemplate:
        spec:
          storageClassName: "standard"
          accessModes: ["ReadWriteOnce"]
          resources:
            requests:
              storage: 5Gi

# Node Exporter (collects node metrics)
prometheus-node-exporter:
  enabled: true
  hostRootfsMount: false  # avoid mounting the host root filesystem
  tolerations:
    - operator: "Exists"
  resources:
    requests:
      memory: 100Mi
      cpu: 100m

# kube-state-metrics
kube-state-metrics:
  enabled: true
  resources:
    requests:
      memory: 200Mi
      cpu: 100m
    limits:
      memory: 500Mi
      cpu: 500m

# Prometheus Operator
prometheusOperator:
  enabled: true
  admissionWebhooks:
    enabled: true
    failurePolicy: Fail
  tls:
    enabled: true
  resources:
    requests:
      memory: 256Mi
      cpu: 200m
    limits:
      memory: 512Mi
      cpu: 500m

# Default alerting and recording rules
defaultRules:
  create: true
  rules:
    alertmanager: true
    etcd: true
    general: true
    k8s: true
    kubeApiserver: true
    kubePrometheusNodeAlerting: true
    kubePrometheusNodeRecording: true
    kubernetesAbsent: true
    kubernetesApps: true
    kubernetesResources: true
    kubernetesStorage: true
    kubernetesSystem: true
    node: true
    prometheus: true
    prometheusOperator: true
EOF

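# Step 2.2: create a password Secret (recommended in production)
cat > grafana-secret.yaml << EOF
apiVersion: v1
kind: Secret
metadata:
  name: grafana-admin-credentials
  namespace: monitoring
type: Opaque
stringData:
  admin-password: ChangeMe123!
  admin-user: admin
EOF
# For Grafana to use this Secret, apply it before installing the chart and reference it in the
# values file through grafana.admin.existingSecret (with userKey: admin-user and
# passwordKey: admin-password) instead of setting adminPassword in plain text.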

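# Step 2.3: create a network policy (optional but recommended)
# Only ingress is restricted here. An egress rule that excludes the private ranges
# (10.0.0.0/8, 172.16.0.0/12, 192.168.0.0/16) would stop Prometheus from scraping in-cluster
# targets and from resolving DNS, so egress is left open.
# If Grafana is exposed through an Ingress controller in another namespace, add that namespace
# to the "from" list.
cat > network-policy.yaml << EOF
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: allow-monitoring-namespace
  namespace: monitoring
spec:
  podSelector: {}
  policyTypes:
    - Ingress
  ingress:
    - from:
        - namespaceSelector:
            matchLabels:
              name: monitoring
        - podSelector: {}
EOF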
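The manifests in this guide are written with two heredoc forms, `<< EOF` and `<< 'EOF'`. The difference matters for any file that contains `$` characters: with an unquoted delimiter the shell expands variables before writing, with a quoted delimiter the text is written literally. A minimal sketch follows; the `NAMESPACE` variable is only an illustration.

```shell
#!/usr/bin/env bash
NAMESPACE=monitoring

# Unquoted delimiter: the shell expands $NAMESPACE before the text is written.
expanded=$(cat << EOF
namespace: $NAMESPACE
EOF
)

# Quoted delimiter: the text is written exactly as typed.
literal=$(cat << 'EOF'
namespace: $NAMESPACE
EOF
)

echo "$expanded"
echo "$literal"
```

For files that carry template placeholders or `$labels`-style references, the quoted form is usually the safer choice.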

3.2.2 Run the Deployment

# Step 3.1: apply the network policy
kubectl apply -f network-policy.yaml

# Step 3.2: install kube-prometheus-stack
# (if the quick install from 3.1.1 is still present, remove it first:
#  helm uninstall prometheus -n monitoring)
helm install prometheus-stack prometheus-community/kube-prometheus-stack \
  --namespace monitoring \
  --version 45.0.0 \
  -f prometheus-stack-values.yaml \
  --wait \
  --timeout 10m \
  --debug

# Step 3.3: verify the installation
echo "=== Pod status ==="
kubectl get pods -n monitoring -w
# Press Ctrl+C to leave watch mode, then continue

echo "=== Service status ==="
kubectl get svc -n monitoring

echo "=== PersistentVolumeClaims ==="
kubectl get pvc -n monitoring

echo "=== ConfigMaps ==="
kubectl get configmaps -n monitoring

3.2.3 Configure Access

# Option A: port-forward (development/testing)
# Run each command in its own terminal:

# Terminal 1 - Grafana
kubectl port-forward svc/prometheus-stack-grafana -n monitoring 3000:80

# Terminal 2 - Prometheus
kubectl port-forward svc/prometheus-stack-kube-prom-prometheus -n monitoring 9090:9090

# Terminal 3 - Alertmanager
kubectl port-forward svc/prometheus-stack-kube-prom-alertmanager -n monitoring 9093:9093

# Option B: NodePort (temporary access)
cat > expose-services.yaml << EOF
apiVersion: v1
kind: Service
metadata:
  name: grafana-external
  namespace: monitoring
spec:
  type: NodePort
  selector:
    app.kubernetes.io/instance: prometheus-stack
    app.kubernetes.io/name: grafana
  ports:
    - port: 80
      targetPort: 3000
      nodePort: 30080
---
apiVersion: v1
kind: Service
metadata:
  name: prometheus-external
  namespace: monitoring
spec:
  type: NodePort
  selector:
    # Pod labels set by the Prometheus Operator; confirm them with
    # kubectl get pods -n monitoring --show-labels
    app.kubernetes.io/name: prometheus
    prometheus: prometheus-stack-kube-prom-prometheus
  ports:
    - port: 9090
      targetPort: 9090
      nodePort: 30090
EOF
kubectl apply -f expose-services.yaml

# Option C: Ingress (recommended for production)
# Each host serves its backend at /, so no rewrite-target annotation is needed.
cat > monitoring-ingress.yaml << EOF
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: monitoring-ingress
  namespace: monitoring
  annotations:
    nginx.ingress.kubernetes.io/ssl-redirect: "true"
    cert-manager.io/cluster-issuer: "letsencrypt-prod"
spec:
  ingressClassName: nginx
  tls:
    - hosts:
        - grafana.yourdomain.com
        - prometheus.yourdomain.com
        - alertmanager.yourdomain.com
      secretName: monitoring-tls
  rules:
    - host: grafana.yourdomain.com
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: prometheus-stack-grafana
                port:
                  number: 80
    - host: prometheus.yourdomain.com
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: prometheus-stack-kube-prom-prometheus
                port:
                  number: 9090
    - host: alertmanager.yourdomain.com
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: prometheus-stack-kube-prom-alertmanager
                port:
                  number: 9093
EOF
# Uncomment after replacing the example hostnames with your own:
# kubectl apply -f monitoring-ingress.yaml
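The service names used in this guide (`prometheus-kube-prometheus-…` for the quick install, `prometheus-stack-kube-prom-…` for the custom install) follow from the Helm release name. The sketch below approximates how the chart appears to derive them: release name plus chart name, cut to 26 characters. This rule is an assumption inferred from the names in this guide, and the function name `stack_fullname` is hypothetical; `kubectl get svc -n monitoring` remains the authoritative check.

```shell
#!/usr/bin/env bash
# Approximates the chart's naming rule (an assumption, not copied from the chart):
# "<release>-<chart>", truncated to 26 characters, trailing "-" removed.
# If the release name already contains the chart name, the release name alone is used.
stack_fullname() {
  local release="$1"
  local chart="kube-prometheus-stack"
  local name
  if [[ "$release" == *"$chart"* ]]; then
    name="$release"
  else
    name="${release}-${chart}"
  fi
  name="${name:0:26}"
  printf '%s\n' "${name%-}"
}

# Show the Prometheus service name for the two release names used in this guide.
for release in prometheus prometheus-stack; do
  echo "release=$release -> svc/$(stack_fullname "$release")-prometheus"
done
```

If a different release name is chosen, every `svc/…` reference in the commands of this guide has to be adjusted accordingly.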

3.3 Initial Configuration

# Wait until the pods are ready (important!)
kubectl wait --for=condition=ready pod -l app.kubernetes.io/name=grafana -n monitoring --timeout=300s
kubectl wait --for=condition=ready pod -l app.kubernetes.io/name=prometheus -n monitoring --timeout=300s

echo "=== All components are ready, starting initial configuration ==="

4. Grafana Configuration

4.1 First Login

URL: http://localhost:3000 (port-forward) or your Ingress address

Default credentials:

Username: admin

Password: ChangeMe123!

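4.2 Add the Prometheus Data Source

# Method A: provision through a ConfigMap that the Grafana sidecar picks up (recommended for automation)
# Note: kube-prometheus-stack already provisions a default Prometheus data source, and Grafana
# accepts only one default per organization. Before applying this ConfigMap, set
# grafana.sidecar.datasources.defaultDatasourceEnabled: false in the values file,
# or change isDefault below to false and use a different name.
cat > datasource-prometheus.yaml << 'EOF'
apiVersion: v1
kind: ConfigMap
metadata:
  name: grafana-datasource-prometheus
  namespace: monitoring
  labels:
    grafana_datasource: "1"
data:
  prometheus.yaml: |-
    apiVersion: 1
    datasources:
      - name: Prometheus
        type: prometheus
        access: proxy
        url: http://prometheus-stack-kube-prom-prometheus.monitoring.svc.cluster.local:9090
        isDefault: true
        editable: true
        jsonData:
          timeInterval: 15s
          queryTimeout: 60s
          httpMethod: POST
          manageAlerts: true
          prometheusType: Prometheus
          prometheusVersion: 2.37.0
          cacheLevel: "High"
          incrementalQueryOverlapWindow: "10m"
        version: 1
EOF
kubectl apply -f datasource-prometheus.yaml

# Restart Grafana so the configuration takes effect
kubectl rollout restart deployment/prometheus-stack-grafana -n monitoring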

4.3 Import Prebuilt Dashboards

# Method A: bulk import through a ConfigMap (recommended)
# The JSON below is only a skeleton with empty panel lists. Replace it with complete dashboard
# JSON, for example downloaded from https://grafana.com/grafana/dashboards/.
# JSON does not allow // comments, so the dashboard files must not contain any.
cat > grafana-dashboards-cm.yaml << 'EOF'
apiVersion: v1
kind: ConfigMap
metadata:
  name: grafana-dashboards
  namespace: monitoring
  labels:
    grafana_dashboard: "1"
data:
  kubernetes-cluster.json: |-
    {
      "annotations": {
        "list": [
          {
            "builtIn": 1,
            "datasource": "-- Grafana --",
            "enable": true,
            "hide": true,
            "iconColor": "rgba(0, 211, 255, 1)",
            "name": "Annotations & Alerts",
            "type": "dashboard"
          }
        ]
      },
      "editable": true,
      "gnetId": null,
      "graphTooltip": 0,
      "id": null,
      "links": [],
      "panels": [],
      "refresh": "10s",
      "schemaVersion": 27,
      "style": "dark",
      "tags": ["kubernetes", "prometheus"],
      "templating": {
        "list": []
      },
      "time": {
        "from": "now-1h",
        "to": "now"
      },
      "timepicker": {},
      "timezone": "",
      "title": "Kubernetes Cluster Monitoring",
      "uid": "kubernetes-cluster",
      "version": 1
    }
  kubernetes-nodes.json: |-
    {
      "title": "Kubernetes Nodes",
      "uid": "node-cluster-usage",
      "version": 1,
      "panels": []
    }
EOF
kubectl apply -f grafana-dashboards-cm.yaml

# Method B: import through the Grafana UI
# 1. Go to "+" → Import
# 2. Enter a dashboard ID from grafana.com, for example:
#    - 3119: Kubernetes cluster monitoring (recommended)
#    - 1860: Node Exporter Full
#    - 315: Kubernetes cluster monitoring (older)
#    (confirm the ID and title on the dashboard's grafana.com page before importing)
# 3. Select the Prometheus data source
# 4. Click Import

4.4 Create a Custom Dashboard

# Create a dashboard for the operations team
# Dashboards loaded from a ConfigMap use the plain dashboard JSON model. The
# {"dashboard": ..., "overwrite": true} wrapper belongs to the HTTP API and is not used here.
cat > custom-ops-dashboard.yaml << 'EOF'
apiVersion: v1
kind: ConfigMap
metadata:
  name: custom-ops-dashboard
  namespace: monitoring
  labels:
    grafana_dashboard: "1"
data:
  ops-dashboard.json: |-
    {
      "id": null,
      "uid": "ops-overview",
      "title": "Operations Overview",
      "tags": ["production", "ops"],
      "timezone": "browser",
      "panels": [],
      "time": {
        "from": "now-6h",
        "to": "now"
      },
      "refresh": "30s"
    }
EOF
kubectl apply -f custom-ops-dashboard.yaml

5. Alerting Configuration

5.1 Check the Built-in Alert Rules

# List the installed alert rules
kubectl get prometheusrules -n monitoring

# Inspect a specific rule group
kubectl get prometheusrules prometheus-stack-kube-prom-prometheus -n monitoring -o yaml | grep -A 20 "groups:"

# Check whether alerts are firing
kubectl port-forward svc/prometheus-stack-kube-prom-prometheus -n monitoring 9090:9090
# Then open http://localhost:9090/alerts in a browser

5.2 Create Custom Alert Rules

# Save as critical-alerts.yaml
apiVersion: monitoring.coreos.com/v1
kind: PrometheusRule
metadata:
  name: custom-critical-alerts
  namespace: monitoring
  labels:
    release: prometheus-stack
    app: kube-prometheus-stack
    component: rules
spec:
  groups:
    - name: critical.alerts
      interval: 30s
      rules:
        - alert: KubePodCrashLooping
          expr: |
            rate(kube_pod_container_status_restarts_total{job="kube-state-metrics"}[15m]) * 60 * 15 > 0
          for: 5m
          labels:
            severity: critical
            team: kubernetes
          annotations:
            summary: "Pod {{ $labels.namespace }}/{{ $labels.pod }} is restarting frequently"
            description: |
              Pod {{ $labels.namespace }}/{{ $labels.pod }} (container: {{ $labels.container }})
              restarted {{ $value | humanize }} times in the last 15 minutes.
              Possible causes: out of memory, misconfiguration, an unavailable dependency.
            runbook_url: "https://wiki.company.com/runbooks/pod-crashlooping"
            dashboard: "https://grafana.company.com/d/kubernetes-pods"
        - alert: KubeNodeNotReady
          expr: |
            kube_node_status_condition{condition="Ready",status="true"} == 0
          for: 10m
          labels:
            severity: critical
          annotations:
            summary: "Node {{ $labels.node }} has been unavailable for more than 10 minutes"
            description: |
              The Ready condition of node {{ $labels.node }} is false.
              Impact: new Pods cannot be scheduled onto this node.
              Immediate action: check the node status and restart the node if necessary.
        # kube-state-metrics v2 exposes allocatable resources as
        # kube_node_status_allocatable{resource="cpu"|"memory"}
        - alert: KubeCPUOvercommit
          expr: |
            sum(namespace_cpu:kube_pod_container_resource_requests:sum{}) / sum(kube_node_status_allocatable{resource="cpu"}) > 1.5
          for: 5m
          labels:
            severity: warning
          annotations:
            summary: "Cluster CPU is overcommitted ({{ $value | humanizePercentage }})"
            description: |
              Requested CPU is {{ $value | humanizePercentage }} of the allocatable CPU.
              This can put nodes under pressure and cause Pod scheduling failures.
        - alert: KubeMemoryOvercommit
          expr: |
            sum(namespace_memory:kube_pod_container_resource_requests:sum{}) / sum(kube_node_status_allocatable{resource="memory"}) > 1.5
          for: 5m
          labels:
            severity: warning
          annotations:
            summary: "Cluster memory is overcommitted ({{ $value | humanizePercentage }})"
        # absent() also fires when the apiserver target has disappeared entirely
        - alert: KubeAPIDown
          expr: |
            absent(up{job="apiserver"} == 1)
          for: 1m
          labels:
            severity: critical
          annotations:
            summary: "The Kubernetes API server is unavailable"
            description: |
              The Kubernetes API server cannot be reached.
              Impact: all kubectl operations, Pod scheduling, and service discovery will fail.
              Immediate action: check the API server Pods, network policies, and certificates.
        - alert: KubeletDown
          expr: |
            up{job="kubelet"} == 0
          for: 5m
          labels:
            severity: warning
          annotations:
            summary: "Kubelet {{ $labels.instance }} is unavailable"
        - alert: PrometheusTargetMissing
          expr: |
            up == 0
          for: 3m
          labels:
            severity: warning
          annotations:
            summary: "Prometheus target missing: {{ $labels.job }} / {{ $labels.instance }}"
            description: |
              Target {{ $labels.job }}/{{ $labels.instance }} has not responded for more than 3 minutes.
              Possible causes: service offline, network problems, authentication failure.
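The KubePodCrashLooping expression multiplies `rate(...[15m])` by `60 * 15`. `rate()` returns a per-second value, so multiplying by the 900 seconds in the window turns it back into an approximate restart count for that window. The arithmetic can be sketched as follows; the function name and the counter values are made up for illustration, and the extrapolation that Prometheus applies at window edges is ignored.

```shell
#!/usr/bin/env bash
# restarts_in_window FIRST LAST WINDOW_SECONDS
# FIRST and LAST are the restart counter values at the start and end of the window.
restarts_in_window() {
  awk -v first="$1" -v last="$2" -v window="$3" 'BEGIN {
    per_second = (last - first) / window   # roughly what rate() returns
    printf "%.2f\n", per_second * window   # the "* 60 * 15" step for a 900 s window
  }'
}

# Counter went from 3 to 5 restarts within 15 minutes.
restarts_in_window 3 5 900
```

Any positive result satisfies the `> 0` condition, so with `for: 5m` the alert fires once a container has kept restarting for about five minutes.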

5.3 Alert Receiver Configuration

# alertmanager-config.yaml
# The Prometheus Operator reads the Alertmanager configuration from the Secret key alertmanager.yaml.
# For this Secret to be used, reference it in the values file through
# alertmanager.alertmanagerSpec.configSecret: alertmanager-config-override.
apiVersion: v1
kind: Secret
metadata:
  name: alertmanager-config-override
  namespace: monitoring
type: Opaque
stringData:
  alertmanager.yaml: |
    global:
      smtp_smarthost: 'smtp.gmail.com:587'
      smtp_from: 'k8s-alerts@yourcompany.com'
      smtp_auth_username: 'alerts@yourcompany.com'
      smtp_auth_password: 'your-app-specific-password'
      smtp_require_tls: true
      slack_api_url: 'https://hooks.slack.com/services/T00000000/B00000000/XXXXXXXXXXXXXXXXXXXXXXXX'
      pagerduty_url: 'https://events.pagerduty.com/v2/enqueue'
      opsgenie_api_url: 'https://api.opsgenie.com/v2/alerts'
      victorops_api_url: 'https://alert.victorops.com/integrations/generic/20131114/alert'
    templates:
      - '/etc/alertmanager/templates/*.tmpl'
    route:
      group_by: ['alertname', 'cluster', 'severity']
      group_wait: 30s
      group_interval: 5m
      repeat_interval: 4h
      receiver: 'slack-notifications'
      # Every receiver named in a route must be defined under receivers,
      # otherwise Alertmanager rejects the configuration.
      routes:
        - match:
            severity: critical
          receiver: 'pagerduty-critical'
          group_wait: 10s
          group_interval: 1m
          repeat_interval: 30m
          continue: true
        - match:
            severity: warning
          receiver: 'slack-warnings'
          group_wait: 30s
          group_interval: 5m
          repeat_interval: 2h
        - match:
            namespace: production
          receiver: 'production-team'
        - match:
            alertname: KubeAPIDown
          receiver: 'sre-pager'
    receivers:
      - name: 'slack-notifications'
        slack_configs:
          - channel: '#k8s-alerts'
            title: '{{ .GroupLabels.severity | toUpper }}: {{ .GroupLabels.alertname }}'
            text: |
              {{ range .Alerts }}
              *Alert:* {{ .Annotations.summary }}
              *Description:* {{ .Annotations.description }}
              *Graph:* <{{ .GeneratorURL }}|:chart_with_upwards_trend:>
              *Runbook:* <{{ .Annotations.runbook_url }}|:spiral_note_pad:>
              *Dashboard:* <{{ .Annotations.dashboard }}|:bar_chart:>
              *Details:*
              {{ range .Labels.SortedPairs }} • {{ .Name }}: {{ .Value }}
              {{ end }}
              {{ end }}
            send_resolved: true
            icon_emoji: ':kubernetes:'
            username: 'K8s-AlertManager'
      - name: 'pagerduty-critical'
        pagerduty_configs:
          - service_key: 'your-pagerduty-integration-key'
            description: '{{ .GroupLabels.alertname }}'
            details:
              severity: '{{ .GroupLabels.severity }}'
              cluster: '{{ .GroupLabels.cluster }}'
              summary: '{{ .CommonAnnotations.summary }}'
            send_resolved: true
      - name: 'email-alerts'
        email_configs:
          - to: 'sre-team@yourcompany.com'
            from: 'k8s-alerts@yourcompany.com'
            smarthost: 'smtp.gmail.com:587'
            auth_username: 'alerts@yourcompany.com'
            auth_password: 'your-password'
            headers:
              Subject: '[{{ .GroupLabels.severity }}] {{ .GroupLabels.alertname }}'
            html: |
              <h2>Kubernetes Alert: {{ .GroupLabels.alertname }}</h2>
              <h3>Severity: {{ .GroupLabels.severity }}</h3>
              <p><strong>Summary:</strong> {{ .CommonAnnotations.summary }}</p>
              <p><strong>Description:</strong> {{ .CommonAnnotations.description }}</p>
              <p><strong>Cluster:</strong> {{ .GroupLabels.cluster }}</p>
              <p><strong>Affected Namespace:</strong> {{ .GroupLabels.namespace }}</p>
              <p><a href="{{ (index .Alerts 0).GeneratorURL }}">View in Prometheus</a></p>
              <p><a href="{{ .CommonAnnotations.dashboard }}">View in Dashboard</a></p>
              <p><a href="{{ .CommonAnnotations.runbook_url }}">Runbook</a></p>
            send_resolved: true
      - name: 'webhook-receiver'
        webhook_configs:
          - url: 'http://webhook-server:8080/alerts'
            send_resolved: true
      # Placeholders for the remaining receivers referenced by the routes above;
      # add their slack_configs, email_configs, or pagerduty_configs before use.
      - name: 'slack-warnings'
      - name: 'production-team'
      - name: 'sre-pager'
      - name: 'null'
    inhibit_rules:
      - source_match:
          severity: 'critical'
        target_match:
          severity: 'warning'
        equal: ['alertname', 'cluster', 'namespace']
      - source_match:
          severity: 'critical'
        target_match:
          severity: 'info'
        equal: ['alertname', 'cluster']
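Alertmanager refuses to load a configuration in which a route points at a receiver that is not defined. The sketch below is a rough pre-flight check for that case. It uses plain text matching rather than a YAML parser, so it assumes one `receiver:` or `- name:` entry per line with no trailing comments; the sample file and the function name `check_receivers` are illustrative. Where `amtool` is installed, `amtool check-config <file>` performs a full validation.

```shell
#!/usr/bin/env bash
# Print receivers that are referenced by a route but never defined under "receivers:".
check_receivers() {
  local file="$1"
  local referenced defined
  referenced=$(sed -n 's/^[[:space:]]*receiver:[[:space:]]*//p' "$file" | tr -d "'\"" | sort -u)
  defined=$(sed -n 's/^[[:space:]]*-[[:space:]]*name:[[:space:]]*//p' "$file" | tr -d "'\"" | sort -u)
  # comm -23 keeps the lines that appear only in the first (referenced) list.
  comm -23 <(printf '%s\n' "$referenced") <(printf '%s\n' "$defined")
}

# Small sample configuration with one undefined receiver.
cfg=$(mktemp)
cat > "$cfg" << 'EOF'
route:
  receiver: 'slack-notifications'
  routes:
    - match:
        severity: critical
      receiver: 'pagerduty-critical'
    - match:
        alertname: KubeAPIDown
      receiver: 'sre-pager'
receivers:
  - name: 'slack-notifications'
  - name: 'pagerduty-critical'
EOF

echo "Undefined receivers:"
check_receivers "$cfg"
```

An empty result means every referenced receiver has a definition; it does not prove that the rest of the file is valid.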

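5.4 Apply the Alerting Configuration

# Apply the Alertmanager configuration
kubectl apply -f alertmanager-config.yaml

# Apply the custom alert rules
kubectl apply -f critical-alerts.yaml

# Restart Alertmanager so the configuration takes effect
# (the operator names the StatefulSet alertmanager-<Alertmanager resource name>)
kubectl rollout restart statefulset/alertmanager-prometheus-stack-kube-prom-alertmanager -n monitoring

# Verify the configuration
kubectl port-forward svc/prometheus-stack-kube-prom-alertmanager -n monitoring 9093:9093
# Then open http://localhost:9093 and check the Status page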

6. Verification and Testing

6.1 Component Health Check

#!/bin/bash
# monitoring-health-check.sh

echo "=== Kubernetes monitoring health check ==="
echo "Checked at: $(date)"
echo

# 1. Check all pods
echo "1. Pod status:"
kubectl get pods -n monitoring -o wide
echo

# 2. Check pod logs for errors
# The pipeline result is captured first: "grep | head || echo" would test the exit status
# of head, so the fallback message would never be printed.
echo "2. Pod logs:"
for pod in $(kubectl get pods -n monitoring -o name); do
  echo "--- $pod ---"
  errors=$(kubectl logs -n monitoring "$pod" --all-containers --tail=5 | grep -E "(error|ERROR|Error|panic|fatal)" | head -5)
  if [ -n "$errors" ]; then
    echo "$errors"
  else
    echo "No error lines found"
  fi
done
echo

# 3. Check service endpoints
echo "3. Service endpoints:"
kubectl get endpoints -n monitoring
echo

# 4. Check PVC status
echo "4. Storage status:"
kubectl get pvc -n monitoring
kubectl get pv | grep monitoring
echo

# 5. Check resource usage (requires metrics-server)
echo "5. Resource usage:"
kubectl top pods -n monitoring
echo

# 6. Check Prometheus targets
echo "6. Prometheus target status:"
kubectl port-forward svc/prometheus-stack-kube-prom-prometheus -n monitoring 9090:9090 &
PF_PID=$!
sleep 5
down=$(curl -s "http://localhost:9090/api/v1/targets" | jq -r '.data.activeTargets[] | select(.health=="down") | .labels')
if [ -n "$down" ]; then
  echo "$down"
else
  echo "All targets are up"
fi
kill "$PF_PID"
echo

# 7. Check alert rules
echo "7. Alert rules:"
kubectl get prometheusrules -n monitoring
echo

echo "=== Health check finished ==="
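A detail of the log check in the script above is worth isolating: in `cmd | grep … | head -5 || echo "…"`, the `||` reacts to the exit status of `head`, which is normally 0 even when `grep` matched nothing, so the fallback message is never printed. A small sketch of a filter that reports "no errors" reliably follows; the function name `report_errors` and the sample log lines are illustrative.

```shell
#!/usr/bin/env bash
# Read log lines on stdin and print up to five that look like errors,
# or a fallback message when none match.
report_errors() {
  local matches
  matches=$(grep -E "(error|ERROR|Error|panic|fatal)" | head -5)
  if [ -n "$matches" ]; then
    printf '%s\n' "$matches"
  else
    echo "no error lines found"
  fi
}

# One sample with an error line, one without.
printf 'level=info msg="ready"\nlevel=error msg="scrape failed"\n' | report_errors
printf 'level=info msg="ready"\n' | report_errors
```

The same function can be fed from `kubectl logs … --tail=N` in place of the sample `printf` lines.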

6.2 Alert Test

# Create a test pod that triggers an alert
cat > test-alert-pod.yaml << EOF
apiVersion: v1
kind: Pod
metadata:
  name: alert-test-pod
  namespace: default
  annotations:
    test: "true"
spec:
  restartPolicy: Always
  containers:
    - name: stress
      image: polinux/stress
      command: ["stress"]
      args: ["--vm", "1", "--vm-bytes", "256M", "--vm-hang", "1"]
      resources:
        requests:
          memory: "100Mi"
          cpu: "100m"
        limits:
          memory: "200Mi"
          cpu: "200m"
EOF

# Apply it and watch for the alert
kubectl apply -f test-alert-pod.yaml

# The container allocates 256M against a 200Mi limit, so it is OOM-killed and restarted repeatedly.
# The rule uses "for: 5m": the alert shows as Pending first and only fires after about 5 minutes.
sleep 30
kubectl port-forward svc/prometheus-stack-kube-prom-prometheus -n monitoring 9090:9090 &
echo "Open http://localhost:9090/alerts in a browser"
echo "Look for the KubePodCrashLooping alert"

# Clean up the test pod once the alert has been observed
kubectl delete pod alert-test-pod

7. Routine Maintenance

7.1 Backing Up the Monitoring System

#!/bin/bash
# backup-monitoring-config.sh

BACKUP_DIR="/backup/monitoring/$(date +%Y%m%d)"
mkdir -p "$BACKUP_DIR"

echo "Backing up the monitoring configuration..."

# 1. Back up the Helm values
helm get values prometheus-stack -n monitoring > "$BACKUP_DIR/helm-values.yaml"

# 2. Back up the monitoring custom resources
kubectl get prometheusrules -n monitoring -o yaml > "$BACKUP_DIR/prometheus-rules.yaml"
kubectl get servicemonitors -n monitoring -o yaml > "$BACKUP_DIR/service-monitors.yaml"
kubectl get podmonitors -n monitoring -o yaml > "$BACKUP_DIR/pod-monitors.yaml"

# 3. Back up the Alertmanager configuration
kubectl get secret alertmanager-config-override -n monitoring -o yaml > "$BACKUP_DIR/alertmanager-config.yaml"

# 4. Back up the Grafana dashboards and data sources
kubectl get configmaps -l grafana_dashboard=1 -n monitoring -o yaml > "$BACKUP_DIR/grafana-dashboards.yaml"
kubectl get configmaps -l grafana_datasource=1 -n monitoring -o yaml > "$BACKUP_DIR/grafana-datasources.yaml"

# 5. Back up workloads and services ("get all" does not include ConfigMaps, Secrets, or PVCs)
kubectl get all -n monitoring -o yaml > "$BACKUP_DIR/all-resources.yaml"

# 6. Compress the backup
tar -czf "$BACKUP_DIR.tar.gz" -C "$(dirname "$BACKUP_DIR")" "$(basename "$BACKUP_DIR")"

echo "Backup finished: $BACKUP_DIR.tar.gz"
echo "Files:"
ls -la "$BACKUP_DIR/"

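7.2 Upgrade Procedure

#!/bin/bash
# upgrade-monitoring-stack.sh
set -e

echo "=== Monitoring stack upgrade ==="
echo "Current version:"
helm list -n monitoring

# 1. Back up the current configuration
./backup-monitoring-config.sh

# 2. Check for new versions
echo "Checking available versions..."
helm search repo prometheus-community/kube-prometheus-stack --versions | head -10
read -p "Enter the version to upgrade to: " VERSION

# 3. Pull the values of the new version (unpacked into ./kube-prometheus-stack)
helm pull prometheus-community/kube-prometheus-stack --version "$VERSION" --untar

# 4. Compare the configuration
echo "Comparing configuration..."
helm get values prometheus-stack -n monitoring > current-values.yaml
diff -u current-values.yaml kube-prometheus-stack/values.yaml || true

read -p "Continue with the upgrade? (y/n): " -n 1 -r
echo
if [[ ! $REPLY =~ ^[Yy]$ ]]; then
  exit 1
fi

# 5. Run the upgrade
# Helm does not upgrade CRDs; check the chart's upgrade notes for manual CRD steps
# before a major-version jump.
# The values file supplies the full set of overrides, so --reuse-values is not used.
echo "Upgrading..."
helm upgrade prometheus-stack prometheus-community/kube-prometheus-stack \
  --namespace monitoring \
  --version "$VERSION" \
  -f prometheus-stack-values.yaml \
  --wait \
  --timeout 15m \
  --atomic \
  --debug

# 6. Verify the upgrade (press Ctrl+C to leave watch mode)
echo "Verifying..."
kubectl get pods -n monitoring -w

echo "Upgrade finished!"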

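7.3 Capacity Planning and Scaling

# Check current usage
# (the operator names the pod prometheus-<Prometheus resource name>-0)
kubectl exec -n monitoring prometheus-prometheus-stack-kube-prom-prometheus-0 -c prometheus -- du -sh /prometheus

# Adjust the Prometheus retention policy
# retentionSize must stay below the volume size, so grow the Prometheus PVC first.
# A later helm upgrade resets these fields to the values file, so update
# prometheus-stack-values.yaml as well.
cat > prometheus-retention-patch.yaml << EOF
spec:
  retention: 30d
  retentionSize: 100GiB
EOF
kubectl patch prometheus prometheus-stack-kube-prom-prometheus -n monitoring --type merge -p "$(cat prometheus-retention-patch.yaml)"

# Expand the Grafana storage
# (requires a StorageClass with allowVolumeExpansion: true; look up the PVC name with
#  kubectl get pvc -n monitoring)
cat > grafana-pvc-patch.yaml << EOF
spec:
  resources:
    requests:
      storage: 20Gi
EOF
kubectl patch pvc prometheus-stack-grafana -n monitoring --type merge -p "$(cat grafana-pvc-patch.yaml)"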
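For sizing the Prometheus volume, the Prometheus storage documentation gives a rough formula: needed disk space = retention time in seconds × ingested samples per second × bytes per sample, with roughly 1 to 2 bytes per sample. The sketch below applies that formula with 2 bytes per sample as a conservative default. The ingestion rate of 10,000 samples per second is a hypothetical workload; the real figure can be read from `rate(prometheus_tsdb_head_samples_appended_total[5m])`.

```shell
#!/usr/bin/env bash
# estimate_disk_gib RETENTION_DAYS SAMPLES_PER_SECOND [BYTES_PER_SAMPLE]
# Prints the estimated TSDB size in whole GiB (rounded down).
estimate_disk_gib() {
  local days="$1" samples_per_sec="$2" bytes_per_sample="${3:-2}"
  local bytes=$(( days * 86400 * samples_per_sec * bytes_per_sample ))
  echo $(( bytes / 1024 / 1024 / 1024 ))
}

# Hypothetical workload: 10,000 samples per second.
echo "15d retention: $(estimate_disk_gib 15 10000) GiB"
echo "30d retention: $(estimate_disk_gib 30 10000) GiB"
```

The result is only a starting point; leave headroom for the WAL and for growth in the number of series.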

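8. Troubleshooting Guide

8.1 Common Problems and Solutions

Problem 1: Pod stuck in Pending

# Diagnosis
kubectl describe pod <pod-name> -n monitoring

# Common causes and solutions:

# Cause A: insufficient resources
# Solution:
kubectl describe nodes | grep -A 10 "Allocated resources"
# Free up resources or add nodes

# Cause B: PVC cannot be bound or mounted
# Solution:
kubectl get pvc -n monitoring
kubectl describe pvc <pvc-name> -n monitoring
# Check the StorageClass and make sure the storage backend is healthy

# Cause C: node selectors or taints
# Solution:
kubectl get nodes --show-labels
kubectl describe node <node-name> | grep -i taint
# Add tolerations or adjust the node selector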

Problem 2: Prometheus cannot scrape metrics

# Diagnosis
kubectl port-forward svc/prometheus-stack-kube-prom-prometheus -n monitoring 9090:9090
# Then open http://localhost:9090/targets

# Common causes:

# 1. Misconfigured ServiceMonitor/PodMonitor
kubectl get servicemonitors -n monitoring
kubectl get podmonitors -n monitoring

# 2. Blocked by a network policy
kubectl get networkpolicies -n monitoring

# 3. Insufficient RBAC permissions
kubectl describe clusterrolebinding prometheus-stack-kube-prom-prometheus
kubectl auth can-i list pods --as=system:serviceaccount:monitoring:prometheus-stack-kube-prom-prometheus

# Solution template:
# (the selector labels must match the labels of the target Service, and "port" must be the
#  name of a port on that Service)
cat > debug-service-monitor.yaml << EOF
apiVersion: monitoring.coreos.com/v1
kind: ServiceMonitor
metadata:
  name: debug-service
  namespace: monitoring
spec:
  selector:
    matchLabels:
      app: my-app
  endpoints:
    - port: metrics
      interval: 30s
      path: /metrics
  namespaceSelector:
    matchNames:
      - default
EOF

Problem 3: Grafana cannot reach the data source

# Diagnosis

# 1. Check the Grafana logs
kubectl logs deployment/prometheus-stack-grafana -n monitoring -f

# 2. Check the data source configuration
kubectl get configmaps -l grafana_datasource=1 -n monitoring -o yaml

# 3. Test connectivity to Prometheus (requires curl in the Grafana image)
kubectl exec -it deployment/prometheus-stack-grafana -n monitoring -- curl -v http://prometheus-stack-kube-prom-prometheus.monitoring.svc.cluster.local:9090/-/healthy

# Common solutions:

# A. Recreate the data source ConfigMap
kubectl delete configmap grafana-datasource-prometheus -n monitoring
kubectl apply -f datasource-prometheus.yaml

# B. Restart Grafana
kubectl rollout restart deployment/prometheus-stack-grafana -n monitoring

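Problem 4: Alertmanager does not send alerts

# Diagnosis

# 1. Check the Alertmanager configuration
#    (use the key that the Secret actually contains; the operator expects alertmanager.yaml)
kubectl get secret alertmanager-config-override -n monitoring -o jsonpath='{.data.alertmanager\.yaml}' | base64 -d

# 2. Check the Alertmanager logs
kubectl logs statefulset/alertmanager-prometheus-stack-kube-prom-alertmanager -n monitoring -f

# 3. Test the mail/SMTP settings
#    The Alertmanager image does not include swaks, so run this from a host or debug pod
#    that has swaks installed and the same network access.
swaks --to test@example.com --from alerts@yourcompany.com --server smtp.gmail.com:587 -tls -au user@gmail.com -ap password

# Solutions:
# A. Update the SMTP credentials
# B. Check that network policies allow outbound connections
# C. Validate the receiver configuration format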

Problem 5: High memory or disk usage

# Diagnostic script
cat > monitoring-resource-check.sh << 'EOF'
#!/bin/bash
echo "=== Monitoring resource check ==="

# Check Prometheus memory usage (PID 1 in the container is the prometheus process)
PROM_POD=$(kubectl get pods -n monitoring -l app.kubernetes.io/name=prometheus -o name | head -1)
echo "Prometheus memory usage:"
kubectl exec -n monitoring "$PROM_POD" -c prometheus -- grep VmRSS /proc/1/status

# Check disk allocation
echo "PVC capacity:"
kubectl get pvc -n monitoring -o json | jq -r '.items[] | "\(.metadata.name): \(.status.capacity.storage) allocated"'

# Check the Prometheus data directory
echo "Prometheus data size:"
kubectl exec -n monitoring "$PROM_POD" -c prometheus -- du -sh /prometheus/wal /prometheus/chunks_head

# Cleanup suggestions
echo "Cleanup suggestions:"
echo "1. Shorten retention: helm upgrade ... --set prometheus.prometheusSpec.retention=15d"
echo "2. Lower retentionSize: Prometheus removes expired blocks by itself; promtool has no manual cleanup command"
echo "3. Add storage: kubectl patch pvc <pvc-name> -n monitoring -p '{\"spec\":{\"resources\":{\"requests\":{\"storage\":\"100Gi\"}}}}'"
EOF

8.2 Emergency Recovery

#!/bin/bash
# emergency-recovery.sh
set -e

echo "=== Monitoring emergency recovery ==="
echo "Time: $(date)"
echo

# Scenario 1: corrupted Prometheus data
# kubectl exec needs a running container, so the backup and cleanup happen before the restart.
# The operator also reverts manual scaling of its StatefulSet, so the pod is deleted and
# recreated instead of being scaled to zero.
if [ "$1" == "prometheus-corrupt" ]; then
  echo "Handling corrupted Prometheus data..."

  PROM_POD=$(kubectl get pods -n monitoring -l app.kubernetes.io/name=prometheus -o name | head -1)

  # 1. Back up the current data to the local machine
  kubectl exec -n monitoring "$PROM_POD" -c prometheus -- tar czf - /prometheus > "prometheus-backup-$(date +%s).tar.gz"

  # 2. Remove the WAL and head chunks
  kubectl exec -n monitoring "$PROM_POD" -c prometheus -- rm -rf /prometheus/wal /prometheus/chunks_head

  # 3. Restart Prometheus by deleting the pod; the StatefulSet recreates it
  kubectl delete -n monitoring "$PROM_POD"

  echo "Recovery finished; samples that were only in the removed WAL are lost"
fi

# Scenario 2: lost Grafana configuration
if [ "$1" == "grafana-lost" ]; then
  echo "Restoring Grafana configuration..."

  # Restore the dashboards from the latest backup directory
  BACKUP_FILE="/backup/monitoring/$(ls -t /backup/monitoring/ | head -1)/grafana-dashboards.yaml"
  if [ -f "$BACKUP_FILE" ]; then
    kubectl apply -f "$BACKUP_FILE"
    kubectl rollout restart deployment/prometheus-stack-grafana -n monitoring
    echo "Grafana configuration restored from backup"
  else
    echo "No backup file found, using the default configuration"
    # Re-apply the initial configuration
    kubectl apply -f grafana-dashboards-cm.yaml
  fi
fi

# Scenario 3: all components failed
if [ "$1" == "complete-failure" ]; then
  echo "Rebuilding the monitoring system from scratch..."
  read -p "Really delete and rebuild the monitoring system? (yes/no): " CONFIRM
  if [ "$CONFIRM" != "yes" ]; then
    exit 1
  fi

  # 1. Back up the configuration
  ./backup-monitoring-config.sh

  # 2. Delete the Helm release
  helm uninstall prometheus-stack -n monitoring

  # 3. Clean up leftover resources
  #    WARNING: deleting the PVCs removes all stored metrics and Grafana data
  kubectl delete pvc -n monitoring --all
  kubectl delete configmaps -n monitoring -l release=prometheus-stack

  # 4. Wait for the cleanup to finish
  sleep 30

  # 5. Reinstall
  helm install prometheus-stack prometheus-community/kube-prometheus-stack \
    --namespace monitoring \
    -f prometheus-stack-values.yaml \
    --wait
fi

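9. Security Hardening Guide

9.1 Basic Security Settings

# security-hardening.yaml
# Reference settings only. With kube-prometheus-stack the operator generates the Prometheus
# configuration, so a hand-written ConfigMap like this one is not loaded automatically.
# In operator-managed deployments the corresponding settings are made on the Prometheus resource
# (for example prometheusSpec.enableAdminAPI: false, and prometheusSpec.web.httpConfig for the
# response headers in recent operator versions).
apiVersion: v1
kind: ConfigMap
metadata:
  name: prometheus-security-config
  namespace: monitoring
data:
  prometheus.yml: |
    global:
      scrape_interval: 30s
      scrape_timeout: 10s
      evaluation_interval: 30s
    # Disable unnecessary features: the admin API is controlled by the
    # --web.enable-admin-api flag (off by default) and the page title by --web.page-title;
    # neither is a key in this file.
    remote_write:
      - url: <remote-write-endpoint>   # placeholder, set to your remote write URL
        # TLS settings
        tls_config:
          ca_file: /etc/prometheus/certs/ca.crt
          cert_file: /etc/prometheus/certs/tls.crt
          key_file: /etc/prometheus/certs/tls.key
    # Restrict scrape targets
    scrape_configs:
      - job_name: 'kubernetes-apiservers'
        kubernetes_sd_configs:
          - role: endpoints
        scheme: https
        tls_config:
          ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
        bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
        relabel_configs:
          - source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]
            action: keep
            regex: default;kubernetes;https
  # Security headers belong in the web configuration file passed with --web.config.file
  web-config.yml: |
    http_server_config:
      http2: true
      headers:
        X-Frame-Options: deny
        X-Content-Type-Options: nosniff
        X-XSS-Protection: "1; mode=block"
        Content-Security-Policy: "default-src 'self'"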

9.2 Network Policies

# network-security-policy.yaml
# The pod labels below should be confirmed with: kubectl get pods -n monitoring --show-labels
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: prometheus-ingress-policy
  namespace: monitoring
spec:
  podSelector:
    matchLabels:
      app.kubernetes.io/name: prometheus
  policyTypes:
    - Ingress
  ingress:
    - from:
        - namespaceSelector:
            matchLabels:
              name: monitoring
        - podSelector:
            matchLabels:
              app: prometheus-operator
      ports:
        - protocol: TCP
          port: 9090
---
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: grafana-ingress-policy
  namespace: monitoring
spec:
  podSelector:
    matchLabels:
      app.kubernetes.io/name: grafana
  policyTypes:
    - Ingress
  ingress:
    - from:
        - ipBlock:
            cidr: 10.0.0.0/8  # restrict access to the internal network
        - namespaceSelector:
            matchLabels:
              name: ingress-nginx
      ports:
        - protocol: TCP
          port: 3000

9.3 Least-Privilege RBAC

# minimal-rbac.yaml
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: prometheus-minimal-role
rules:
  - apiGroups: [""]
    resources:
      - nodes
      - nodes/metrics
      - services
      - endpoints
      - pods
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources:
      - configmaps
    verbs: ["get"]
  - nonResourceURLs: ["/metrics"]
    verbs: ["get"]

10. Performance Tuning

10.1 Prometheus Tuning

# prometheus-optimization.yaml
prometheus:
  prometheusSpec:
    # Adjust resource limits
    resources:
      requests:
        memory: "4Gi"
        cpu: "2"
      limits:
        memory: "8Gi"
        cpu: "4"
    # Tuning: retention, WAL compression, and query limits have dedicated fields,
    # and the operator manages the corresponding command-line flags itself.
    retention: 30d
    retentionSize: 100GB
    walCompression: true
    query:
      maxConcurrency: 40
      timeout: 2m
    # Flags without a dedicated field are passed as name/value pairs
    additionalArgs:
      - name: web.max-connections
        value: "512"
    # Enable the Thanos sidecar (large deployments).
    # With object storage configured, the operator pins the TSDB block duration to 2h on its own.
    thanos:
      image: quay.io/thanos/thanos:v0.28.0
      objectStorageConfig:
        key: thanos.yaml
        name: thanos-objstore-config

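10.2 Scrape Optimization

# optimized-scraping.yaml
apiVersion: monitoring.coreos.com/v1
kind: Prometheus
metadata:
  name: optimized
  namespace: monitoring
spec:
  scrapeInterval: 30s
  evaluationInterval: 30s
  externalLabels:
    cluster: "production"
  # Scrape in tiers: the Prometheus resource has no inline scrapeConfigs field. Raw scrape jobs
  # go into a Secret referenced by additionalScrapeConfigs (the Secret name and key below are
  # examples), and ServiceMonitor endpoints can set their own interval.
  additionalScrapeConfigs:
    name: additional-scrape-configs
    key: scrape-configs.yaml
  # Contents of scrape-configs.yaml in that Secret:
  #   - job_name: 'high-frequency'
  #     scrape_interval: 15s
  #     static_configs:
  #       - targets: ['critical-service:9090']
  #   - job_name: 'low-frequency'
  #     scrape_interval: 60s
  #     static_configs:
  #       - targets: ['batch-job:9090']
  # Enable scrape sharding
  shards: 4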

11. Official Reference Links

11.1 Core Documentation

- kube-prometheus (Prometheus Operator) on GitHub: https://github.com/prometheus-operator/kube-prometheus
- Prometheus documentation: https://prometheus.io/docs/introduction/overview/
- Grafana documentation: https://grafana.com/docs/
- Alertmanager configuration: https://prometheus.io/docs/alerting/latest/configuration/
- Helm chart repository: https://artifacthub.io/packages/helm/prometheus-community/kube-prometheus-stack

11.2 Best Practices

- Prometheus metric and label naming practices: https://prometheus.io/docs/practices/naming/
- SRE monitoring guide: https://sre.google/sre-book/monitoring-distributed-systems/
- Kubernetes monitoring patterns: https://kubernetes.io/docs/concepts/cluster-administration/monitoring/

11.3 Dashboard Resources

- Grafana dashboards: https://grafana.com/grafana/dashboards/
- Prometheus alert rule examples: https://awesome-prometheus-alerts.grep.to/
- Kubernetes dashboard collection: https://github.com/dotdc/grafana-dashboards-kubernetes

11.4 Troubleshooting Resources

- Prometheus getting started: https://prometheus.io/docs/prometheus/latest/getting_started/
- Kubernetes debugging guide: https://kubernetes.io/docs/tasks/debug/
- Prometheus Operator issue tracker: https://github.com/prometheus-operator/prometheus-operator/issues

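12. Appendix

12.1 Deployment Checklist

- Environment prerequisites verified
- Helm 3 installed and configured
- Storage class available with enough capacity
- Network policies allow the required traffic
- Custom values.yaml adjusted to the environment
- All Pods started and running
- Data source configured correctly
- Dashboards imported
- Alert rules configured
- Alert channels tested
- Backup strategy in place
- Security hardening applied
- Documentation updated

12.2 Monitoring Metrics Checklist

| Category | Key metric | Alert threshold | Check interval |
|---|---|---|---|
| Cluster state | kube_node_status_condition | Ready≠true for >5m | 30s |
| Node resources | node_memory_MemAvailable_bytes | <10% | 1m |
| Pod state | kube_pod_status_phase | Failed or Pending for >5m | 30s |
| Workloads | kube_deployment_status_replicas_available | below desired count | 1m |
| Storage | kubelet_volume_stats_available_bytes | <10% | 5m |
| Network | node_network_receive_bytes_total | spike of >200% | 1m |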
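As an illustration of how a row of this table can be turned into a rule, the sketch below writes a PrometheusRule for the node memory row (available memory below 10%). The resource name, alert name, output file name, and the `for: 5m` duration are assumptions made for this example; the metric names are the standard Node Exporter ones, and the `release` label matches the custom install in this guide.

```shell
#!/usr/bin/env bash
# Write a PrometheusRule manifest for the "node memory available < 10%" threshold.
# Names and the 5m duration are illustrative, not taken from the chart.
rule_file="node-memory-alert.yaml"
cat > "$rule_file" << 'EOF'
apiVersion: monitoring.coreos.com/v1
kind: PrometheusRule
metadata:
  name: node-memory-low
  namespace: monitoring
  labels:
    release: prometheus-stack
spec:
  groups:
    - name: node.memory
      rules:
        - alert: NodeMemoryAvailableLow
          expr: node_memory_MemAvailable_bytes / node_memory_MemTotal_bytes < 0.10
          for: 5m
          labels:
            severity: warning
          annotations:
            summary: "Node {{ $labels.instance }} has less than 10% memory available"
EOF
echo "wrote $rule_file"
```

The file can then be reviewed and applied with `kubectl apply -f node-memory-alert.yaml`. The quoted heredoc delimiter keeps `{{ $labels.instance }}` from being expanded by the shell.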

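Important notes:

- Validate thoroughly in a test environment before deploying to production
- Review and update the alert rules regularly
- The monitoring system itself needs to be monitored
- Establish solid change management and rollback procedures
- Make sure all operations meet security and compliance requirements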
