目录

🐍 Python 爬虫实战:2025年最新全国行政区划代码抓取(解决反爬与动态加载)📅 项目背景🛠️ 技术栈与环境💡 核心功能实现1. 健壮的网络请求层(Session & Retry)2. 混合解析策略(正则大法好)3. 反反爬虫策略

📊 数据输出格式1. `administrative_divisions.csv`2. `administrative_divisions.json`

🚀 如何运行第一步:安装依赖第二步:运行脚本

完整代码📝 总结

专栏导读

🌸 欢迎来到Python办公自动化专栏—Python处理办公问题,解放您的双手

🏳️🌈 个人博客主页:请点击——> 个人的博客主页 求收藏

🏳️🌈 Github主页:请点击——> Github主页 求Star⭐

🏳️🌈 知乎主页:请点击——> 知乎主页 求关注

🏳️🌈 CSDN博客主页:请点击——> CSDN的博客主页 求关注

👍 该系列文章专栏:请点击——>Python办公自动化专栏 求订阅

🕷 此外还有爬虫专栏:请点击——>Python爬虫基础专栏 求订阅

📕 此外还有python基础专栏:请点击——>Python基础学习专栏 求订阅

文章作者技术和水平有限,如果文中出现错误,希望大家能指正🙏

❤️ 欢迎各位佬关注! ❤️

🐍 Python 爬虫实战:2025年最新全国行政区划代码抓取(解决反爬与动态加载)

摘要:本文详细介绍如何使用 Python 编写一个健壮的爬虫,从目标网站抓取中国最新的省、市、县三级行政区划代码。我们将重点攻克 SSL 验证错误、动态 JS 链接解析以及服务器反爬限制等技术难点,最终输出结构化的 CSV 和 JSON 数据。

📅 项目背景

在数据分析、物流配送、用户注册等场景中,一份最新、准确的**全国行政区划代码(省市区三级联动数据)**是必不可少的基础数据。虽然国家统计局每年会发布相关数据,但通过编程自动获取并整理成易用的格式(如 JSON/CSV)仍然是一个常见的技术需求。

本项目旨在解决以下核心问题:

数据完整性:覆盖全国所有省份(包括港澳台及新疆兵团等特殊区域)。层级关系:精确构建 省 -> 市 -> 县/区 的树状结构。技术攻坚:解决目标网站的 SSL 握手失败、动态 JavaScript 链接展开以及访问频率限制问题。

🛠️ 技术栈与环境

语言:Python 3.x核心库:

requests

re

csv

json

time

random

💡 核心功能实现

1. 健壮的网络请求层(Session & Retry)

在抓取过程中,我们遇到了

SSL: WRONG_VERSION_NUMBER

requests.get

Session

import requests

from requests.adapters import HTTPAdapter

from requests.packages.urllib3.util.retry import Retry

# 配置重试策略

session = requests.Session()

retries = Retry(

total=5,

backoff_factor=1,

status_forcelist=[500, 502, 503, 504]

)

adapter = HTTPAdapter(max_retries=retries)

# 挂载适配器,同时支持 HTTP 和 HTTPS

session.mount('http://', adapter)

session.mount('https://', adapter)

# 设置通用的 Headers 和 Cookies(模拟浏览器)

session.headers.update({

"User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64)...",

"Referer": "https://www.suchajun.com/..."

})

2. 混合解析策略(正则大法好)

目标网站的页面结构存在两种情况:

标准链接:普通的

<a>

href

javascript:void(0)

data-code

我们需要同时处理这两种情况:

# 模式1:标准链接匹配

pattern_level2_link = r'<div class="col"><a[^>]+href="([^"]+)"[^>]*>([^<]+)</a></div>s*<div class="col">(d+)</div>'

# 模式2:JS 动态链接匹配(关键!)

# 提取 data-code 属性,自行拼接 URL

pattern_level2_js = r'<div class="col">.*<a href="javascript:void(0);" class="city" data-code="(d+)">([^<]+)</a></div>'

# 逻辑判断

if matches_level2_link:

# 处理标准链接...

elif matches_level2_js:

# 处理 JS 链接,手动构造 URL

# link = f"{base_url}/richang/xingzhengquhuadaima/{code}"

3. 反反爬虫策略

为了避免被服务器识别为机器人并封禁 IP,我们采取了“慢即是快”的策略:

随机延迟:每次请求前随机休眠

3.0

6.0

strip()

rstrip(':')

📊 数据输出格式

脚本运行完成后,会生成两个文件:

1.

administrative_divisions.csv

administrative_divisions.csv

适合导入数据库或 Excel 分析,包含父子级联关系。

| Level | Name | Code | Link | Parent Code |

|---|---|---|---|---|

| 1 | 辽宁省 | 210000 | …/210000 | |

| 2 | 沈阳市 | 210100 | …/210100 | 210000 |

| 3 | 和平区 | 210102 | …/210102 | 210100 |

2.

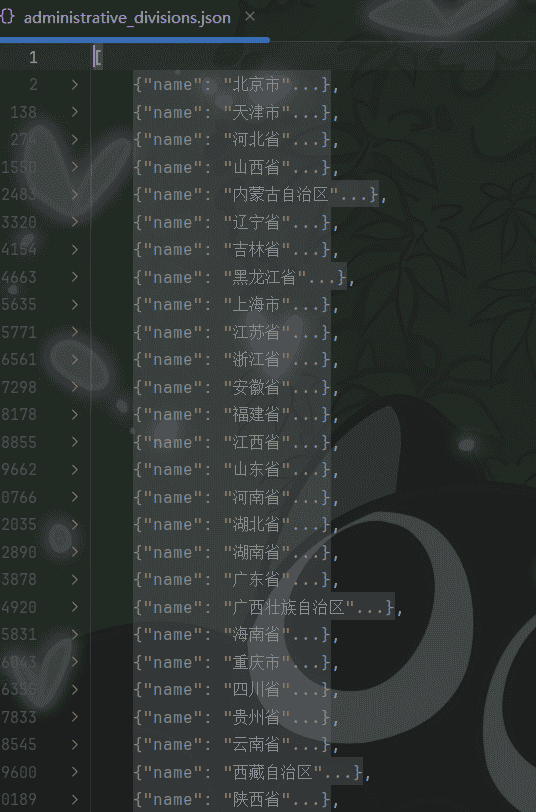

administrative_divisions.json

administrative_divisions.json

树状结构,适合前端组件(如级联选择器 Cascader)直接使用。

[

{

"name": "辽宁省",

"code": "210000",

"level": 1,

"children": [

{

"name": "沈阳市",

"code": "210100",

"level": 2,

"children": [

{

"name": "和平区",

"code": "210102",

"level": 3

}

// ... 更多区县

]

}

]

}

]

🚀 如何运行

第一步:安装依赖

确保目录下有

requirements.txt

pip install -r requirements.txt

第二步:运行脚本

python main.py

注意:由于设置了较长的随机延迟以保护账号和 IP,抓取全国完整数据可能需要 30 分钟以上。请耐心等待,或在代码中修改

target_provinces

完整代码

import requests

import re

import time

import csv

import json

import random

from requests.adapters import HTTPAdapter

from requests.packages.urllib3.util.retry import Retry

headers = {

"Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,image/avif,image/webp,image/apng,*/*;q=0.8,application/signed-exchange;v=b3;q=0.7",

"Accept-Language": "zh-CN,zh;q=0.9,en;q=0.8,en-GB;q=0.7,en-US;q=0.6",

"Connection": "keep-alive",

"Referer": "https://www.suchajun.com/richang/xingzhengquhuadaima?q=",

"Sec-Fetch-Dest": "document",

"Sec-Fetch-Mode": "navigate",

"Sec-Fetch-Site": "same-origin",

"Sec-Fetch-User": "?1",

"Upgrade-Insecure-Requests": "1",

"User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/143.0.0.0 Safari/537.36 Edg/143.0.0.0",

"sec-ch-ua": ""Microsoft Edge";v="143", "Chromium";v="143", "Not A(Brand";v="24"",

"sec-ch-ua-mobile": "?0",

"sec-ch-ua-platform": ""Windows""

}

cookies = {

"scjuid": "dqc5vpl99gq4s8ko8rbbqfog4k",

"_csrf": "d7f8fe71429999f110e724cce45daaacf8c8192702c42a3425ff4de1a6704574a:2:%7Bi:0%3Bs:5:%22_csrf%22%3Bi:1%3Bs:32:%22JAto3-BzNnqqna-wwKcAd40HIQAbRSgF%22%3B%7D"

}

url = "https://www.suchajun.com/richang/xingzhengquhuadaima"

params = {

"q": ""

}

requests.packages.urllib3.disable_warnings()

base_url = "https://www.suchajun.com"

# Setup Session with retries

session = requests.Session()

retries = Retry(total=5, backoff_factor=1, status_forcelist=[500, 502, 503, 504])

adapter = HTTPAdapter(max_retries=retries)

session.mount('http://', adapter)

session.mount('https://', adapter)

session.headers.update(headers)

session.cookies.update(cookies)

def get_data(url):

try:

# Random delay before request to behave more like a human

time.sleep(random.uniform(3.0, 6.0))

response = session.get(url, verify=False, timeout=20)

response.encoding = 'utf-8'

# Check if redirected to verification page

if 'verify' in response.url or '39.97.5.232' in response.url:

print(f"Warning: Redirected to verification page for {url}")

return ""

return response.text

except Exception as e:

print(f"Error fetching {url}: {e}")

# If error occurs (like the SSL verification redirect), wait longer and return empty

time.sleep(10)

return ""

print("Fetching Level 1...")

response_text = get_data(url)

# Extract data

pattern_level1 = r'<td><a href="([^"]+)">([^<]+)</a></td>s*<td>(d+)</td>'

# Pattern for direct links (like Beijing -> Dongcheng)

pattern_level2_link = r'<div class="col"><a[^>]+href="([^"]+)"[^>]*>([^<]+)</a></div>s*<div class="col">(d+)</div>'

# Pattern for JS expandable links (like Hebei -> Shijiazhuang)

# <div class="col"><span>+</span><a href="javascript:void(0);" class="city" data-code="130100">石家庄市</a></div>

pattern_level2_js = r'<div class="col">.*<a href="javascript:void(0);" class="city" data-code="(d+)">([^<]+)</a></div>'

# Pattern for Level 3 inside the expandable div (Shijiazhuang -> Changan)

# <div class="col"> <a href="/richang/xingzhengquhuadaima/130102">长安区</a></div>

pattern_level3 = r'<div class="col"> <a href="([^"]+)">([^<]+)</a></div>s*<div class="col">(d+)</div>'

matches = re.findall(pattern_level1, response_text)

results = []

print("行政区划名称|行政区划代码|二级链接")

# target_provinces = ["辽宁省", "福建省", "广西壮族自治区", "海南省", "甘肃省", "青海省"]

target_provinces = [] # Process all

for link, name, code in matches:

if target_provinces and name not in target_provinces:

continue

link = link.strip().rstrip(':') # Fix: Remove trailing colon and whitespace

full_link = base_url + link

print(f"{name}|{code}|{full_link}")

level1_data = {

"name": name,

"code": code,

"link": full_link,

"level": 1,

"children": []

}

results.append(level1_data)

# Fetch Level 2

time.sleep(0.5) # Add delay to avoid being blocked

level2_text = get_data(full_link)

# Try finding standard links first (like Beijing)

matches_level2 = re.findall(pattern_level2_link, level2_text)

if matches_level2:

for l2_link, l2_name, l2_code in matches_level2:

if l2_code == code:

continue # Skip self

l2_full_link = base_url + l2_link

print(f" {l2_name}|{l2_code}|{l2_full_link}")

level2_data = {

"name": l2_name,

"code": l2_code,

"link": l2_full_link,

"level": 2,

"parent_code": code,

"children": []

}

level1_data["children"].append(level2_data)

# Try finding JS expandable links (like Hebei)

matches_level2_js = re.findall(pattern_level2_js, level2_text)

if matches_level2_js:

for l2_code, l2_name in matches_level2_js:

l2_full_link = f"{base_url}/richang/xingzhengquhuadaima/{l2_code}"

print(f" {l2_name}|{l2_code}|{l2_full_link}")

level2_data = {

"name": l2_name,

"code": l2_code,

"link": l2_full_link,

"level": 2,

"parent_code": code,

"children": []

}

level1_data["children"].append(level2_data)

# Extract children (Level 3) for this city

# We look for the div with

# Since we don't have a robust HTML parser, we'll try to capture the content until the next div class="row" that is NOT part of this block,

# OR just look for pattern_level3 inside the specific chunk.

# Better way: split the text by 'id="county-'

# But let's try a regex that matches the div content.

# <div class="county m-3 mt-0 mb-0"> ... content ... </div>

# The content contains multiple <div class="row ..."> ... </div>

# Let's find the start index

start_marker = f'id="county-{l2_code}"'

start_pos = level2_text.find(start_marker)

if start_pos != -1:

# Find the end of this div. It's risky without counting braces.

# However, the structure seems consistent.

# Let's just search for pattern_level3 starting from start_pos

# until we hit something that looks like the start of another city or end of container.

# Let's limit the search range to, say, 10000 chars or until next 'id="county-'

remaining_text = level2_text[start_pos:]

next_marker_pos = remaining_text.find('id="county-', 1) # Find next county block

if next_marker_pos != -1:

block_text = remaining_text[:next_marker_pos]

else:

block_text = remaining_text

matches_l3 = re.findall(pattern_level3, block_text)

for l3_link, l3_name, l3_code in matches_l3:

l3_full_link = base_url + l3_link

print(f" {l3_name}|{l3_code}|{l3_full_link}")

level3_data = {

"name": l3_name,

"code": l3_code,

"link": l3_full_link,

"level": 3,

"parent_code": l2_code

}

level2_data["children"].append(level3_data)

# Save to JSON

with open('administrative_divisions.json', 'w', encoding='utf-8') as f:

json.dump(results, f, ensure_ascii=False, indent=4)

print("Saved to administrative_divisions.json")

# Save to CSV

with open('administrative_divisions.csv', 'w', newline='', encoding='utf-8') as f:

writer = csv.writer(f)

writer.writerow(['Level', 'Name', 'Code', 'Link', 'Parent Code'])

def write_node(node, parent_code=""):

writer.writerow([node['level'], node['name'], node['code'], node['link'], parent_code])

if 'children' in node:

for child in node['children']:

write_node(child, node['code'])

for item in results:

write_node(item)

print("Saved to administrative_divisions.csv")

📝 总结

通过本次实战,我们不仅获取了有价值的数据,更重要的是实践了处理复杂网络环境和非标准 HTML 结构的技巧。特别是 Regex 处理 JS 动态数据 和 Session 重试机制,是编写高可用爬虫的必备技能。

希望这篇文章对你有所帮助!如果代码运行遇到问题,欢迎留言交流。

结尾

希望对初学者有帮助;致力于办公自动化的小小程序员一枚

希望能得到大家的【❤️一个免费关注❤️】感谢!

求个 🤞 关注 🤞 +❤️ 喜欢 ❤️ +👍 收藏 👍

此外还有办公自动化专栏,欢迎大家订阅:Python办公自动化专栏

此外还有爬虫专栏,欢迎大家订阅:Python爬虫基础专栏

此外还有Python基础专栏,欢迎大家订阅:Python基础学习专栏

相关文章