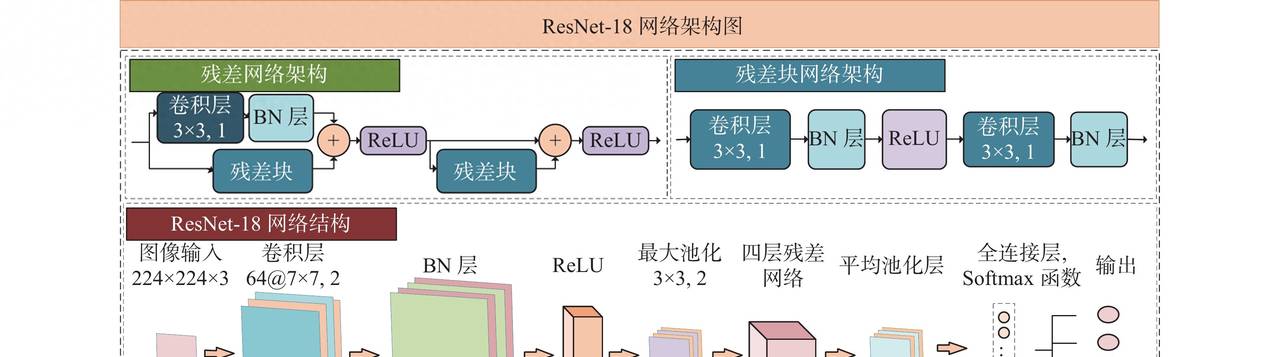

I. Build a ResNet-18 network in PyTorch, train it, and export it to ONNX

import os

import torch
import torch.nn as nn
import torchvision

# device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
device = torch.device("cpu")

# Transform configuration and data augmentation
transform_train = torchvision.transforms.Compose([
    torchvision.transforms.Pad(4),
    torchvision.transforms.RandomHorizontalFlip(),  # flip horizontally with probability 0.5
    torchvision.transforms.RandomCrop(32),  # randomly crop back to 32x32
    # torchvision.transforms.RandomVerticalFlip(),
    # torchvision.transforms.RandomRotation(15),
    torchvision.transforms.ToTensor(),  # convert to a tensor scaled to [0, 1]
    torchvision.transforms.Normalize([0.5, 0.5, 0.5], [0.5, 0.5, 0.5]),
    # torchvision.transforms.Normalize((0.4914, 0.4822, 0.4465), (0.2023, 0.1994, 0.2010))
])

transform_test = torchvision.transforms.Compose([
    torchvision.transforms.ToTensor(),
    torchvision.transforms.Normalize([0.5, 0.5, 0.5], [0.5, 0.5, 0.5]),
    # torchvision.transforms.Normalize((0.4914, 0.4822, 0.4465), (0.2023, 0.1994, 0.2010))
])

# The augmentations above are applied on the fly, each time a sample is loaded during an epoch
num_classes = 10
batch_size = 128
learning_rate = 0.001
num_epoches = 2
classes = ("plane", "car", "bird", "cat", "deer", "dog", "frog", "horse", "ship", "truck")

# Load the dataset (downloaded on first use)
train_dataset = torchvision.datasets.CIFAR10('./data', download=True, train=True, transform=transform_train)
test_dataset = torchvision.datasets.CIFAR10('./data', download=True, train=False, transform=transform_test)

# Data loaders
train_loader = torch.utils.data.DataLoader(train_dataset, batch_size=batch_size, shuffle=True)
test_loader = torch.utils.data.DataLoader(test_dataset, batch_size=batch_size, shuffle=False)

# 3x3 convolution helper
def conv3x3(in_channels, out_channels, stride=1):
    return nn.Conv2d(in_channels, out_channels, kernel_size=3, stride=stride, padding=1, bias=False)


class ResidualBlock(nn.Module):
    def __init__(self, in_channels, out_channels, stride=1, downsample=None):
        super(ResidualBlock, self).__init__()
        self.conv1 = conv3x3(in_channels, out_channels, stride)
        self.bn1 = nn.BatchNorm2d(out_channels)
        self.relu = nn.ReLU(inplace=True)
        self.conv2 = conv3x3(out_channels, out_channels)
        self.bn2 = nn.BatchNorm2d(out_channels)
        self.downsample = downsample

    def forward(self, x):
        residual = x
        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)
        out = self.conv2(out)
        out = self.bn2(out)
        # Project the shortcut when the shape changes
        if self.downsample is not None:
            residual = self.downsample(x)
        out += residual
        out = self.relu(out)
        return out

# Define a custom network by subclassing nn.Module: __init__ creates each layer,
# and forward wires the layers together.
class ResNet(nn.Module):
    def __init__(self, block, layers, num_classes):
        super(ResNet, self).__init__()
        self.in_channels = 16
        self.conv = conv3x3(3, 16)
        self.bn = torch.nn.BatchNorm2d(16)
        self.relu = torch.nn.ReLU(inplace=True)
        self.layer1 = self._make_layers(block, 16, layers[0])
        self.layer2 = self._make_layers(block, 32, layers[1], 2)
        self.layer3 = self._make_layers(block, 64, layers[2], 2)
        self.layer4 = self._make_layers(block, 128, layers[3], 2)
        self.avg_pool = torch.nn.AdaptiveAvgPool2d((1, 1))
        self.fc = torch.nn.Linear(128, num_classes)

    def _make_layers(self, block, out_channels, blocks, stride=1):
        downsample = None
        if (stride != 1) or (self.in_channels != out_channels):
            downsample = torch.nn.Sequential(
                conv3x3(self.in_channels, out_channels, stride=stride),
                torch.nn.BatchNorm2d(out_channels))
        layers = []
        layers.append(block(self.in_channels, out_channels, stride, downsample))
        self.in_channels = out_channels
        for i in range(1, blocks):
            layers.append(block(out_channels, out_channels))
        return torch.nn.Sequential(*layers)

    def forward(self, x):
        out = self.conv(x)
        out = self.bn(out)
        out = self.relu(out)
        out = self.layer1(out)
        out = self.layer2(out)
        out = self.layer3(out)
        out = self.layer4(out)
        out = self.avg_pool(out)
        out = out.view(out.size(0), -1)
        out = self.fc(out)
        return out

# Build the model on the CPU
model = ResNet(ResidualBlock, [2, 2, 2, 2], num_classes).to(device=device)

# Print the model structure
# print(f"Model structure: {model}\n")

# Loss function and optimizer
criterion = nn.CrossEntropyLoss()  # cross-entropy loss
optimizer = torch.optim.Adam(model.parameters(), lr=learning_rate)  # Adam optimizer (not plain SGD)

if __name__ == "__main__":
    # Train the model
    total_step = len(train_loader)
    for epoch in range(0, num_epoches):
        for i, (images, labels) in enumerate(train_loader):
            images = images.to(device=device)
            labels = labels.to(device=device)

            # Forward pass
            outputs = model(images)
            loss = criterion(outputs, labels)

            # Backward and optimize
            optimizer.zero_grad()
            # Backpropagation
            loss.backward()
            # Update the parameters
            optimizer.step()

            # sum_loss += loss.item()
            # _, predicted = torch.max(outputs.data, dim=1)
            # total += labels.size(0)
            # correct += predicted.eq(labels.data).cpu().sum()

            # Log once per epoch, at the last step
            if (i + 1) % total_step == 0:
                print('Epoch [{}/{}], Step [{}/{}], Loss: {:.4f}'
                      .format(epoch + 1, num_epoches, i + 1, total_step, loss.item()))
    print("Finished Training")

    # Save the weights
    # torch.save(model.state_dict(), 'model_weights.pth')
    # torch.save(model, 'model.pt')

    print('\nTest the model')
    model.eval()
    with torch.no_grad():
        correct = 0
        total = 0
        for images, labels in test_loader:
            images = images.to(device=device)
            labels = labels.to(device=device)
            outputs = model(images)
            _, predicted = torch.max(outputs.data, 1)
            total += labels.size(0)
            correct += (predicted == labels).sum().item()
        print('Accuracy on the 10000 test images: {:.4f} %'.format(100 * correct / total))

    # Export the ONNX model
    x = torch.randn((1, 3, 32, 32))
    torch.onnx.export(model, x, './resnet18.onnx', opset_version=12, input_names=['input'], output_names=['output'])
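As a quick sanity check on the architecture above, the spatial size of the feature maps can be worked out by hand with the standard convolution output-size formula. The sketch below is plain Python and does not touch PyTorch; the helper name `conv_out_size` is only illustrative. It assumes a 32x32 input and the 3x3, padding-1 convolutions built by `conv3x3`, with stride 1 in the stem and `layer1` and stride 2 at the start of `layer2`, `layer3`, and `layer4`.

```python
def conv_out_size(size, kernel=3, stride=1, padding=1):
    """Output height/width of a convolution: floor((size + 2p - k) / s) + 1."""
    return (size + 2 * padding - kernel) // stride + 1


# (stage name, output channels, stride of the first conv), as in ResNet.__init__
stages = [("stem", 16, 1), ("layer1", 16, 1), ("layer2", 32, 2),
          ("layer3", 64, 2), ("layer4", 128, 2)]

size = 32  # CIFAR-10 images are 32x32
shapes = {}
for name, channels, stride in stages:
    # Only the first conv of a stage can change the size; the rest use stride 1
    size = conv_out_size(size, stride=stride)
    shapes[name] = (channels, size, size)
    print(f"{name}: {channels} x {size} x {size}")
```

The final 128 x 4 x 4 map is what `AdaptiveAvgPool2d((1, 1))` reduces to 128 values before the `Linear(128, num_classes)` layer. The same shapes show up in the layer table of the RKNN build log later in this article.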

To get a demo running quickly, num_epoches is set to 2. The resulting accuracy is mediocre, but the ONNX model is generated.

Epoch [1/2], Step [391/391], Loss: 1.0813

Epoch [2/2], Step [391/391], Loss: 0.8880

Finished Training

Test the model

Accuracy on the 10000 test images: 65.7200 %

D:code28\resnet18\resnet18.py:164: DeprecationWarning: You are using the legacy TorchScript-based ONNX export. Starting in PyTorch 2.9, the new torch.export-based ONNX exporter will be the default. To switch now, set dynamo=True in torch.onnx.export. This new exporter supports features like exporting LLMs with DynamicCache. We encourage you to try it and share feedback to help improve the experience. Learn more about the new export logic: https://pytorch.org/docs/stable/onnx_dynamo.html. For exporting control flow: https://pytorch.org/tutorials/beginner/onnx/export_control_flow_model_to_onnx_tutorial.html.

torch.onnx.export(model, x, './resnet18.onnx', opset_version=12, input_names=['input'], output_names=['output'])

II. Convert the model to the RKNN format for deployment on the RK3588

import numpy as np
import cv2
from rknn.api import RKNN
import torchvision.models as models
import torch
import os


def softmax(x):
    return np.exp(x) / np.sum(np.exp(x))


def torch_version():
    import torch
    torch_ver = torch.__version__.split('.')
    torch_ver[2] = torch_ver[2].split('+')[0]
    return [int(v) for v in torch_ver]


if __name__ == '__main__':
    if torch_version() < [1, 9, 0]:
        import torch
        print("Your torch version is '{}', in order to better support the Quantization Aware Training (QAT) model,\n"
              "Please update the torch version to '1.9.0' or higher!".format(torch.__version__))
        exit(0)

    MODEL = './resnet18.onnx'

    # Create RKNN object
    rknn = RKNN(verbose=True)

    # Pre-process config
    print('--> Config model')
    # Note: training used Normalize(0.5, 0.5), i.e. (pixel - 127.5) / 127.5, so
    # std_values=[127.5, 127.5, 127.5] would match it exactly. The values below
    # are the ones used for the run logged in this article.
    rknn.config(mean_values=[127.5, 127.5, 127.5], std_values=[255, 255, 255], target_platform='rk3588')
    # rknn.config(mean_values=[123.675, 116.28, 103.53], std_values=[58.395, 58.395, 58.395], target_platform='rk3568')
    # rknn.config(mean_values=[125.307, 122.961, 113.8575], std_values=[51.5865, 50.847, 51.255], target_platform='rk3568')
    print('done')

    # Load model
    print('--> Loading model')
    # ret = rknn.load_pytorch(model=model, input_size_list=input_size_list)
    ret = rknn.load_onnx(model=MODEL)
    if ret != 0:
        print('Load model failed!')
        exit(ret)
    print('done')

    # Build model (no quantization, so the model runs in float16)
    print('--> Building model')
    ret = rknn.build(do_quantization=False)
    if ret != 0:
        print('Build model failed!')
        exit(ret)
    print('done')

    # Export rknn model (same file name that the on-board inference script loads)
    print('--> Export rknn model')
    ret = rknn.export_rknn('./resnet18.rknn')
    if ret != 0:
        print('Export rknn model failed!')
        exit(ret)
    print('done')

    # Set inputs
    img = cv2.imread('./0_125.jpg')
    img = cv2.cvtColor(img, cv2.COLOR_BGR2RGB)
    img = cv2.resize(img, (32, 32))
    # Add the batch dimension: the toolkit expects a (1, 32, 32, 3) input.
    # The logged run omitted this line, which is why it prints the "expect dims is 4" warning.
    img = np.expand_dims(img, 0)

    # Init runtime environment (no target given, so the simulator is used)
    print('--> Init runtime environment')
    ret = rknn.init_runtime()
    if ret != 0:
        print('Init runtime environment failed!')
        exit(ret)
    print('done')

    # Inference
    print('--> Running model')
    outputs = rknn.inference(inputs=[img])
    np.save('./pytorch_resnet18_qat_0.npy', outputs[0])
    # show_outputs(softmax(np.array(outputs[0][0])))
    print(outputs)
    print('done')

    rknn.release()
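The `mean_values` and `std_values` given to `rknn.config` work on raw 0-255 pixels, while the training pipeline applies `ToTensor()` (divide by 255) and then `Normalize(0.5, 0.5)`. The sketch below compares the two in plain Python. It assumes the toolkit computes `(pixel - mean) / std` per channel, as its documentation describes; the helper names `train_normalize` and `rknn_normalize` are made up for this illustration.

```python
def train_normalize(pixel, mean=0.5, std=0.5):
    """ToTensor() followed by Normalize(mean, std), for one 0-255 pixel value."""
    return (pixel / 255.0 - mean) / std


def rknn_normalize(pixel, mean_value, std_value):
    """Normalization in raw pixel units: (pixel - mean_value) / std_value."""
    return (pixel - mean_value) / std_value


for pixel in (0, 64, 255):
    t = train_normalize(pixel)
    a = rknn_normalize(pixel, 127.5, 127.5)  # matches the training transform
    b = rknn_normalize(pixel, 127.5, 255.0)  # values used in the script above
    print(f"pixel={pixel:3d}  training={t:+.4f}  std=127.5 -> {a:+.4f}  std=255 -> {b:+.4f}")
```

Under that assumption, `std_values=[255, 255, 255]` feeds the network inputs in [-0.5, 0.5], half the range it saw during training, while `std_values=[127.5, 127.5, 127.5]` reproduces the training range [-1, 1].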

Execution output

I rknn-toolkit2 version: 2.3.2

--> Config model

done

--> Loading model

I Loading : 100%|████████████████████████████████████████████████| 42/42 [00:00<00:00, 14642.24it/s]

done

--> Building model

D base_optimize ...

D base_optimize done.

D

D fold_constant ...

D fold_constant done.

D

D correct_ops ...

D correct_ops done.

D

D fuse_ops ...

D fuse_ops results:

D convert_global_avgpool_to_conv: remove node = ['/avg_pool/GlobalAveragePool'], add node = ['/avg_pool/GlobalAveragePool_2conv0']

D convert_gemm_by_exmatmul: remove node = ['/fc/Gemm'], add node = ['/Reshape_output_0_tp', '/Reshape_output_0_tp_rs', '/fc/Gemm#1', 'output_mm_tp', 'output_mm_tp_rs']

D unsqueeze_to_4d_transpose: remove node = [], add node = ['/Reshape_output_0_rs', '/Reshape_output_0_tp-rs']

D bypass_two_reshape: remove node = ['/Reshape_output_0_rs', '/Reshape']

D fuse_transpose_reshape: remove node = ['/Reshape_output_0_tp', 'output_mm_tp']

D bypass_two_reshape: remove node = ['/Reshape_output_0_tp_rs', '/Reshape_output_0_tp-rs']

D convert_exmatmul_to_conv: remove node = ['/fc/Gemm#1'], add node = ['/fc/Gemm#2']

D fold_constant ...

D fold_constant done.

D fuse_ops done.

D

D sparse_weight ...

D sparse_weight done.

D

I rknn building ...

I RKNN: [10:16:04.731] compress = 0, conv_eltwise_activation_fuse = 1, global_fuse = 1, multi-core-model-mode = 7, output_optimize = 1, layout_match = 1, enable_argb_group = 0, op_group_sram_opt = 0, enable_flash_attention = 0, op_group_nbuf_opt = 0, safe_fuse = 0

I RKNN: librknnc version: 2.3.2 (@2025-04-03T08:30:46)

D RKNN: [10:16:04.748] RKNN is invoked

D RKNN: [10:16:04.810] >>>>>> start: rknn::RKNNExtractCustomOpAttrs

D RKNN: [10:16:04.811] <<<<<<<< end: rknn::RKNNExtractCustomOpAttrs

D RKNN: [10:16:04.811] >>>>>> start: rknn::RKNNSetOpTargetPass

D RKNN: [10:16:04.811] <<<<<<<< end: rknn::RKNNSetOpTargetPass

D RKNN: [10:16:04.811] >>>>>> start: rknn::RKNNBindNorm

D RKNN: [10:16:04.811] <<<<<<<< end: rknn::RKNNBindNorm

D RKNN: [10:16:04.811] >>>>>> start: rknn::RKNNEliminateQATDataConvert

D RKNN: [10:16:04.811] <<<<<<<< end: rknn::RKNNEliminateQATDataConvert

D RKNN: [10:16:04.811] >>>>>> start: rknn::RKNNConvStrideFixPass

D RKNN: [10:16:04.811] <<<<<<<< end: rknn::RKNNConvStrideFixPass

D RKNN: [10:16:04.811] >>>>>> start: rknn::RKNNTileGroupConv

D RKNN: [10:16:04.811] <<<<<<<< end: rknn::RKNNTileGroupConv

D RKNN: [10:16:04.811] >>>>>> start: rknn::RKNNAddConvBias

D RKNN: [10:16:04.811] <<<<<<<< end: rknn::RKNNAddConvBias

D RKNN: [10:16:04.811] >>>>>> start: rknn::RKNNTileChannel

D RKNN: [10:16:04.811] <<<<<<<< end: rknn::RKNNTileChannel

D RKNN: [10:16:04.811] >>>>>> start: rknn::RKNNDuplicateWeightPass

D RKNN: [10:16:04.811] <<<<<<<< end: rknn::RKNNDuplicateWeightPass

D RKNN: [10:16:04.811] >>>>>> start: rknn::RKNNInitRNNConst

D RKNN: [10:16:04.811] <<<<<<<< end: rknn::RKNNInitRNNConst

D RKNN: [10:16:04.811] >>>>>> start: rknn::RKNNPerChannelPrep

D RKNN: [10:16:04.811] <<<<<<<< end: rknn::RKNNPerChannelPrep

D RKNN: [10:16:04.811] >>>>>> start: rknn::RKNNBnQuant

D RKNN: [10:16:04.811] <<<<<<<< end: rknn::RKNNBnQuant

D RKNN: [10:16:04.812] >>>>>> start: rknn::RKNNFuseOptimizerPass

D RKNN: [10:16:04.814] <<<<<<<< end: rknn::RKNNFuseOptimizerPass

D RKNN: [10:16:04.814] >>>>>> start: rknn::RKNNTurnAutoPad

D RKNN: [10:16:04.814] <<<<<<<< end: rknn::RKNNTurnAutoPad

D RKNN: [10:16:04.814] >>>>>> start: rknn::RKNNInitCastConst

D RKNN: [10:16:04.814] <<<<<<<< end: rknn::RKNNInitCastConst

D RKNN: [10:16:04.814] >>>>>> start: rknn::RKNNMultiSurfacePass

D RKNN: [10:16:04.814] <<<<<<<< end: rknn::RKNNMultiSurfacePass

D RKNN: [10:16:04.815] >>>>>> start: rknn::RKNNReplaceConstantTensorPass

D RKNN: [10:16:04.815] <<<<<<<< end: rknn::RKNNReplaceConstantTensorPass

D RKNN: [10:16:04.815] >>>>>> start: rknn::RKNNSubgraphManager

D RKNN: [10:16:04.815] <<<<<<<< end: rknn::RKNNSubgraphManager

D RKNN: [10:16:04.815] >>>>>> start: OpEmit

D RKNN: [10:16:04.815] <<<<<<<< end: OpEmit

D RKNN: [10:16:04.815] >>>>>> start: rknn::RKNNAddFirstConv

D RKNN: [10:16:04.815] <<<<<<<< end: rknn::RKNNAddFirstConv

D RKNN: [10:16:04.815] >>>>>> start: rknn::RKNNTilingPass

D RKNN: [10:16:04.816] <<<<<<<< end: rknn::RKNNTilingPass

D RKNN: [10:16:04.816] >>>>>> start: rknn::RKNNLayoutMatchPass

D RKNN: [10:16:04.816] <<<<<<<< end: rknn::RKNNLayoutMatchPass

D RKNN: [10:16:04.816] >>>>>> start: rknn::RKNNAddSecondaryNode

D RKNN: [10:16:04.816] <<<<<<<< end: rknn::RKNNAddSecondaryNode

D RKNN: [10:16:04.817] >>>>>> start: rknn::RKNNAllocateConvCachePass

D RKNN: [10:16:04.817] <<<<<<<< end: rknn::RKNNAllocateConvCachePass

D RKNN: [10:16:04.817] >>>>>> start: OpEmit

D RKNN: [10:16:04.820] <<<<<<<< end: OpEmit

D RKNN: [10:16:04.820] >>>>>> start: rknn::RKNNSubGraphMemoryPlanPass

D RKNN: [10:16:04.820] <<<<<<<< end: rknn::RKNNSubGraphMemoryPlanPass

D RKNN: [10:16:04.820] >>>>>> start: rknn::RKNNProfileAnalysisPass

D RKNN: [10:16:04.820] <<<<<<<< end: rknn::RKNNProfileAnalysisPass

D RKNN: [10:16:04.821] >>>>>> start: rknn::RKNNOperatorIdGenPass

D RKNN: [10:16:04.821] <<<<<<<< end: rknn::RKNNOperatorIdGenPass

D RKNN: [10:16:04.821] >>>>>> start: rknn::RKNNWeightTransposePass

D RKNN: [10:16:04.827] <<<<<<<< end: rknn::RKNNWeightTransposePass

D RKNN: [10:16:04.827] >>>>>> start: rknn::RKNNCPUWeightTransposePass

D RKNN: [10:16:04.827] <<<<<<<< end: rknn::RKNNCPUWeightTransposePass

D RKNN: [10:16:04.827] >>>>>> start: rknn::RKNNModelBuildPass

E RKNN: [10:16:04.843] Unkown op target: 0

D RKNN: [10:16:04.843] <<<<<<<< end: rknn::RKNNModelBuildPass

D RKNN: [10:16:04.843] >>>>>> start: rknn::RKNNModelRegCmdbuildPass

D RKNN: [10:16:04.849] --------------------------------------------------------------------------------------------------------------------------------------------------------------------------

D RKNN: [10:16:04.849] Network Layer Information Table

D RKNN: [10:16:04.849] --------------------------------------------------------------------------------------------------------------------------------------------------------------------------

D RKNN: [10:16:04.849] ID OpType DataType Target InputShape OutputShape Cycles(DDR/NPU/Total) RW(KB) FullName

D RKNN: [10:16:04.849] --------------------------------------------------------------------------------------------------------------------------------------------------------------------------

D RKNN: [10:16:04.849] 0 InputOperator FLOAT16 CPU (1,3,32,32) 0/0/0 0 InputOperator:input

D RKNN: [10:16:04.849] 1 ConvRelu FLOAT16 NPU (1,3,32,32),(16,3,3,3),(16) (1,16,32,32) 1746/9216/9216 8 Conv:/conv/Conv

D RKNN: [10:16:04.849] 2 ConvRelu FLOAT16 NPU (1,16,32,32),(16,16,3,3),(16) (1,16,32,32) 2969/9216/9216 36 Conv:/layer1/layer1.0/conv1/Conv

D RKNN: [10:16:04.849] 3 ConvAddRelu FLOAT16 NPU (1,16,32,32),(16,16,3,3),(16),... (1,16,32,32) 4354/9216/9216 68 Conv:/layer1/layer1.0/conv2/Conv_ConvAddRelu

D RKNN: [10:16:04.849] 4 ConvRelu FLOAT16 NPU (1,16,32,32),(16,16,3,3),(16) (1,16,32,32) 2969/9216/9216 36 Conv:/layer1/layer1.1/conv1/Conv

D RKNN: [10:16:04.849] 5 ConvAddRelu FLOAT16 NPU (1,16,32,32),(16,16,3,3),(16),... (1,16,32,32) 4354/9216/9216 68 Conv:/layer1/layer1.1/conv2/Conv_ConvAddRelu

D RKNN: [10:16:04.849] 6 ConvRelu FLOAT16 NPU (1,16,32,32),(32,16,3,3),(32) (1,32,16,16) 2473/4608/4608 41 Conv:/layer2/layer2.0/conv1/Conv

D RKNN: [10:16:04.849] 7 Conv FLOAT16 NPU (1,32,16,16),(32,32,3,3),(32) (1,32,16,16) 2170/4608/4608 34 Conv:/layer2/layer2.0/conv2/Conv

D RKNN: [10:16:04.849] 8 ConvAddRelu FLOAT16 NPU (1,16,32,32),(32,16,3,3),(32),... (1,32,16,16) 3166/4608/4608 57 Conv:/layer2/layer2.0/downsample/downsample.0/Conv_ConvAddRelu

D RKNN: [10:16:04.849] 9 ConvRelu FLOAT16 NPU (1,32,16,16),(32,32,3,3),(32) (1,32,16,16) 2170/4608/4608 34 Conv:/layer2/layer2.1/conv1/Conv

D RKNN: [10:16:04.849] 10 ConvAddRelu FLOAT16 NPU (1,32,16,16),(32,32,3,3),(32),... (1,32,16,16) 2863/4608/4608 50 Conv:/layer2/layer2.1/conv2/Conv_ConvAddRelu

D RKNN: [10:16:04.849] 11 ConvRelu FLOAT16 NPU (1,32,16,16),(64,32,3,3),(64) (1,64,8,8) 2609/2304/2609 52 Conv:/layer3/layer3.0/conv1/Conv

D RKNN: [10:16:04.849] 12 Conv FLOAT16 NPU (1,64,8,8),(64,64,3,3),(64) (1,64,8,8) 3821/4608/4608 80 Conv:/layer3/layer3.0/conv2/Conv

D RKNN: [10:16:04.849] 13 ConvAddRelu FLOAT16 NPU (1,32,16,16),(64,32,3,3),(64),... (1,64,8,8) 2955/2304/2955 60 Conv:/layer3/layer3.0/downsample/downsample.0/Conv_ConvAddRelu

D RKNN: [10:16:04.849] 14 ConvRelu FLOAT16 NPU (1,64,8,8),(64,64,3,3),(64) (1,64,8,8) 3821/4608/4608 80 Conv:/layer3/layer3.1/conv1/Conv

D RKNN: [10:16:04.849] 15 ConvAddRelu FLOAT16 NPU (1,64,8,8),(64,64,3,3),(64),... (1,64,8,8) 4167/4608/4608 88 Conv:/layer3/layer3.1/conv2/Conv_ConvAddRelu

D RKNN: [10:16:04.849] 16 ConvRelu FLOAT16 NPU (1,64,8,8),(128,64,3,3),(128) (1,128,4,4) 6776/2304/6776 152 Conv:/layer4/layer4.0/conv1/Conv

D RKNN: [10:16:04.849] 17 Conv FLOAT16 NPU (1,128,4,4),(128,128,3,3),(128) (1,128,4,4) 12836/4608/12836 292 Conv:/layer4/layer4.0/conv2/Conv

D RKNN: [10:16:04.849] 18 ConvAddRelu FLOAT16 NPU (1,64,8,8),(128,64,3,3),(128),... (1,128,4,4) 6949/2304/6949 156 Conv:/layer4/layer4.0/downsample/downsample.0/Conv_ConvAddRelu

D RKNN: [10:16:04.849] 19 ConvRelu FLOAT16 NPU (1,128,4,4),(128,128,3,3),(128) (1,128,4,4) 12836/4608/12836 292 Conv:/layer4/layer4.1/conv1/Conv

D RKNN: [10:16:04.849] 20 ConvAddRelu FLOAT16 NPU (1,128,4,4),(128,128,3,3),(128),... (1,128,4,4) 13009/4608/13009 296 Conv:/layer4/layer4.1/conv2/Conv_ConvAddRelu

D RKNN: [10:16:04.849] 21 Conv FLOAT16 NPU (1,128,4,4),(1,128,4,4),(128) (1,128,1,1) 379/512/512 8 Conv:/avg_pool/GlobalAveragePool_2conv0

D RKNN: [10:16:04.849] 22 Conv FLOAT16 NPU (1,128,1,1),(10,128,1,1),(10) (1,10,1,1) 124/64/124 2 Conv:/fc/Gemm#2

D RKNN: [10:16:04.849] 23 Reshape FLOAT16 CPU (1,10,1,1),(2) (1,10) 0/0/0 0 Reshape:output_mm_tp_rs

D RKNN: [10:16:04.849] 24 OutputOperator FLOAT16 CPU (1,10) 0/0/0 0 OutputOperator:output

D RKNN: [10:16:04.849] --------------------------------------------------------------------------------------------------------------------------------------------------------------------------

D RKNN: [10:16:04.850] <<<<<<<< end: rknn::RKNNModelRegCmdbuildPass

D RKNN: [10:16:04.850] >>>>>> start: rknn::RKNNModelExportPass

D RKNN: [10:16:04.850] Export RKNN model to /tmp/tmphkipd27f/check.rknn

D RKNN: [10:16:04.856] <<<<<<<< end: rknn::RKNNModelExportPass

D RKNN: [10:16:04.856] >>>>>> start: rknn::RKNNMemStatisticsPass

D RKNN: [10:16:04.857] ---------------------------------------------------------------------------------------------------------------------------------------------

D RKNN: [10:16:04.857] Feature Tensor Information Table

D RKNN: [10:16:04.857] -----------------------------------------------------------------------------------------------------------+---------------------------------

D RKNN: [10:16:04.857] ID User Tensor DataType DataFormat OrigShape NativeShape | [Start End) Size

D RKNN: [10:16:04.857] -----------------------------------------------------------------------------------------------------------+---------------------------------

D RKNN: [10:16:04.857] 1 ConvRelu input FLOAT16 NC1HWC2 (1,3,32,32) (1,4,32,32,3) | 0x00000000 0x00001800 0x00001800

D RKNN: [10:16:04.857] 2 ConvRelu /relu/Relu_output_0 FLOAT16 NC1HWC2 (1,16,32,32) (1,8,32,32,8) | 0x00001800 0x00009800 0x00008000

D RKNN: [10:16:04.857] 3 ConvAddRelu /layer1/layer1.0/relu/Relu_output_0 FLOAT16 NC1HWC2 (1,16,32,32) (1,8,32,32,8) | 0x00009800 0x00011800 0x00008000

D RKNN: [10:16:04.857] 3 ConvAddRelu /relu/Relu_output_0 FLOAT16 NC1HWC2 (1,16,32,32) (1,8,32,32,8) | 0x00001800 0x00009800 0x00008000

D RKNN: [10:16:04.857] 4 ConvRelu /layer1/layer1.0/relu_1/Relu_output_0 FLOAT16 NC1HWC2 (1,16,32,32) (1,8,32,32,8) | 0x00011800*0x00019800 0x00008000

D RKNN: [10:16:04.857] 5 ConvAddRelu /layer1/layer1.1/relu/Relu_output_0 FLOAT16 NC1HWC2 (1,16,32,32) (1,8,32,32,8) | 0x00000000 0x00008000 0x00008000

D RKNN: [10:16:04.857] 5 ConvAddRelu /layer1/layer1.0/relu_1/Relu_output_0 FLOAT16 NC1HWC2 (1,16,32,32) (1,8,32,32,8) | 0x00011800*0x00019800 0x00008000

D RKNN: [10:16:04.857] 6 ConvRelu /layer1/layer1.1/relu_1/Relu_output_0 FLOAT16 NC1HWC2 (1,16,32,32) (1,8,32,32,8) | 0x00008000 0x00010000 0x00008000

D RKNN: [10:16:04.857] 7 Conv /layer2/layer2.0/relu/Relu_output_0 FLOAT16 NC1HWC2 (1,32,16,16) (1,16,16,16,8) | 0x00000000 0x00004000 0x00004000

D RKNN: [10:16:04.857] 8 ConvAddRelu /layer1/layer1.1/relu_1/Relu_output_0 FLOAT16 NC1HWC2 (1,16,32,32) (1,8,32,32,8) | 0x00008000 0x00010000 0x00008000

D RKNN: [10:16:04.857] 8 ConvAddRelu /layer2/layer2.0/conv2/Conv_output_0 FLOAT16 NC1HWC2 (1,32,16,16) (1,16,16,16,8) | 0x00004000 0x00008000 0x00004000

D RKNN: [10:16:04.857] 9 ConvRelu /layer2/layer2.0/relu_1/Relu_output_0 FLOAT16 NC1HWC2 (1,32,16,16) (1,16,16,16,8) | 0x00000000 0x00004000 0x00004000

D RKNN: [10:16:04.857] 10 ConvAddRelu /layer2/layer2.1/relu/Relu_output_0 FLOAT16 NC1HWC2 (1,32,16,16) (1,16,16,16,8) | 0x00004000 0x00008000 0x00004000

D RKNN: [10:16:04.857] 10 ConvAddRelu /layer2/layer2.0/relu_1/Relu_output_0 FLOAT16 NC1HWC2 (1,32,16,16) (1,16,16,16,8) | 0x00000000 0x00004000 0x00004000

D RKNN: [10:16:04.857] 11 ConvRelu /layer2/layer2.1/relu_1/Relu_output_0 FLOAT16 NC1HWC2 (1,32,16,16) (1,16,16,16,8) | 0x00008000 0x0000c000 0x00004000

D RKNN: [10:16:04.857] 12 Conv /layer3/layer3.0/relu/Relu_output_0 FLOAT16 NC1HWC2 (1,64,8,8) (1,32,8,8,8) | 0x00000000 0x00002000 0x00002000

D RKNN: [10:16:04.857] 13 ConvAddRelu /layer2/layer2.1/relu_1/Relu_output_0 FLOAT16 NC1HWC2 (1,32,16,16) (1,16,16,16,8) | 0x00008000 0x0000c000 0x00004000

D RKNN: [10:16:04.857] 13 ConvAddRelu /layer3/layer3.0/conv2/Conv_output_0 FLOAT16 NC1HWC2 (1,64,8,8) (1,32,8,8,8) | 0x00002000 0x00004000 0x00002000

D RKNN: [10:16:04.857] 14 ConvRelu /layer3/layer3.0/relu_1/Relu_output_0 FLOAT16 NC1HWC2 (1,64,8,8) (1,32,8,8,8) | 0x00000000 0x00002000 0x00002000

D RKNN: [10:16:04.857] 15 ConvAddRelu /layer3/layer3.1/relu/Relu_output_0 FLOAT16 NC1HWC2 (1,64,8,8) (1,32,8,8,8) | 0x00002000 0x00004000 0x00002000

D RKNN: [10:16:04.857] 15 ConvAddRelu /layer3/layer3.0/relu_1/Relu_output_0 FLOAT16 NC1HWC2 (1,64,8,8) (1,32,8,8,8) | 0x00000000 0x00002000 0x00002000

D RKNN: [10:16:04.857] 16 ConvRelu /layer3/layer3.1/relu_1/Relu_output_0 FLOAT16 NC1HWC2 (1,64,8,8) (1,32,8,8,8) | 0x00004000 0x00006000 0x00002000

D RKNN: [10:16:04.857] 17 Conv /layer4/layer4.0/relu/Relu_output_0 FLOAT16 NC1HWC2 (1,128,4,4) (1,64,4,4,8) | 0x00000000 0x00001000 0x00001000

D RKNN: [10:16:04.857] 18 ConvAddRelu /layer3/layer3.1/relu_1/Relu_output_0 FLOAT16 NC1HWC2 (1,64,8,8) (1,32,8,8,8) | 0x00004000 0x00006000 0x00002000

D RKNN: [10:16:04.857] 18 ConvAddRelu /layer4/layer4.0/conv2/Conv_output_0 FLOAT16 NC1HWC2 (1,128,4,4) (1,64,4,4,8) | 0x00001000 0x00002000 0x00001000

D RKNN: [10:16:04.857] 19 ConvRelu /layer4/layer4.0/relu_1/Relu_output_0 FLOAT16 NC1HWC2 (1,128,4,4) (1,64,4,4,8) | 0x00000000 0x00001000 0x00001000

D RKNN: [10:16:04.857] 20 ConvAddRelu /layer4/layer4.1/relu/Relu_output_0 FLOAT16 NC1HWC2 (1,128,4,4) (1,64,4,4,8) | 0x00001000 0x00002000 0x00001000

D RKNN: [10:16:04.857] 20 ConvAddRelu /layer4/layer4.0/relu_1/Relu_output_0 FLOAT16 NC1HWC2 (1,128,4,4) (1,64,4,4,8) | 0x00000000 0x00001000 0x00001000

D RKNN: [10:16:04.857] 21 Conv /layer4/layer4.1/relu_1/Relu_output_0 FLOAT16 NC1HWC2 (1,128,4,4) (1,64,4,4,8) | 0x00002000 0x00003000 0x00001000

D RKNN: [10:16:04.857] 22 Conv /avg_pool/GlobalAveragePool_output_0 FLOAT16 NC1HWC2 (1,128,1,1) (1,64,1,1,8) | 0x00000000 0x00000100 0x00000100

D RKNN: [10:16:04.857] 23 Reshape output_mm FLOAT16 NC1HWC2 (1,10,1,1) (1,8,1,1,8) | 0x00000100 0x00000120 0x00000020

D RKNN: [10:16:04.857] 24 OutputOperator output FLOAT16 UNDEFINED (1,10) (1,10) | 0x00000040 0x00000080 0x00000040

D RKNN: [10:16:04.857] -----------------------------------------------------------------------------------------------------------+---------------------------------

D RKNN: [10:16:04.857] ----------------------------------------------------------------------------------------------------------------

D RKNN: [10:16:04.857] Const Tensor Information Table

D RKNN: [10:16:04.857] ------------------------------------------------------------------------------+---------------------------------

D RKNN: [10:16:04.857] ID User Tensor DataType OrigShape | [Start End) Size

D RKNN: [10:16:04.857] ------------------------------------------------------------------------------+---------------------------------

D RKNN: [10:16:04.857] 1 ConvRelu onnx::Conv_197 FLOAT16 (16,3,3,3) | 0x00000a40 0x00001340 0x00000900

D RKNN: [10:16:04.857] 1 ConvRelu onnx::Conv_198 FLOAT (16) | 0x00001340 0x00001380 0x00000040

D RKNN: [10:16:04.857] 2 ConvRelu onnx::Conv_200 FLOAT16 (16,16,3,3) | 0x00001380 0x00002580 0x00001200

D RKNN: [10:16:04.857] 2 ConvRelu onnx::Conv_201 FLOAT (16) | 0x00002580 0x000025c0 0x00000040

D RKNN: [10:16:04.857] 3 ConvAddRelu onnx::Conv_203 FLOAT16 (16,16,3,3) | 0x000025c0 0x000037c0 0x00001200

D RKNN: [10:16:04.857] 3 ConvAddRelu onnx::Conv_204 FLOAT (16) | 0x000037c0 0x00003800 0x00000040

D RKNN: [10:16:04.857] 4 ConvRelu onnx::Conv_206 FLOAT16 (16,16,3,3) | 0x00003800 0x00004a00 0x00001200

D RKNN: [10:16:04.857] 4 ConvRelu onnx::Conv_207 FLOAT (16) | 0x00004a00 0x00004a40 0x00000040

D RKNN: [10:16:04.857] 5 ConvAddRelu onnx::Conv_209 FLOAT16 (16,16,3,3) | 0x00004a40 0x00005c40 0x00001200

D RKNN: [10:16:04.857] 5 ConvAddRelu onnx::Conv_210 FLOAT (16) | 0x00005c40 0x00005c80 0x00000040

D RKNN: [10:16:04.857] 6 ConvRelu onnx::Conv_212 FLOAT16 (32,16,3,3) | 0x00005c80 0x00008080 0x00002400

D RKNN: [10:16:04.857] 6 ConvRelu onnx::Conv_213 FLOAT (32) | 0x00008080 0x00008100 0x00000080

D RKNN: [10:16:04.857] 7 Conv onnx::Conv_215 FLOAT16 (32,32,3,3) | 0x00008100 0x0000c900 0x00004800

D RKNN: [10:16:04.857] 7 Conv onnx::Conv_216 FLOAT (32) | 0x0000c900 0x0000c980 0x00000080

D RKNN: [10:16:04.857] 8 ConvAddRelu onnx::Conv_218 FLOAT16 (32,16,3,3) | 0x0000c980 0x0000ed80 0x00002400

D RKNN: [10:16:04.857] 8 ConvAddRelu onnx::Conv_219 FLOAT (32) | 0x0000ed80 0x0000ee00 0x00000080

D RKNN: [10:16:04.857] 9 ConvRelu onnx::Conv_221 FLOAT16 (32,32,3,3) | 0x0000ee00 0x00013600 0x00004800

D RKNN: [10:16:04.857] 9 ConvRelu onnx::Conv_222 FLOAT (32) | 0x00013600 0x00013680 0x00000080

D RKNN: [10:16:04.857] 10 ConvAddRelu onnx::Conv_224 FLOAT16 (32,32,3,3) | 0x00013680 0x00017e80 0x00004800

D RKNN: [10:16:04.857] 10 ConvAddRelu onnx::Conv_225 FLOAT (32) | 0x00017e80 0x00017f00 0x00000080

D RKNN: [10:16:04.857] 11 ConvRelu onnx::Conv_227 FLOAT16 (64,32,3,3) | 0x00017f00 0x00020f00 0x00009000

D RKNN: [10:16:04.857] 11 ConvRelu onnx::Conv_228 FLOAT (64) | 0x00020f00 0x00021000 0x00000100

D RKNN: [10:16:04.857] 12 Conv onnx::Conv_230 FLOAT16 (64,64,3,3) | 0x00021000 0x00033000 0x00012000

D RKNN: [10:16:04.857] 12 Conv onnx::Conv_231 FLOAT (64) | 0x00033000 0x00033100 0x00000100

D RKNN: [10:16:04.857] 13 ConvAddRelu onnx::Conv_233 FLOAT16 (64,32,3,3) | 0x00033100 0x0003c100 0x00009000

D RKNN: [10:16:04.857] 13 ConvAddRelu onnx::Conv_234 FLOAT (64) | 0x0003c100 0x0003c200 0x00000100

D RKNN: [10:16:04.857] 14 ConvRelu onnx::Conv_236 FLOAT16 (64,64,3,3) | 0x0003c200 0x0004e200 0x00012000

D RKNN: [10:16:04.857] 14 ConvRelu onnx::Conv_237 FLOAT (64) | 0x0004e200 0x0004e300 0x00000100

D RKNN: [10:16:04.857] 15 ConvAddRelu onnx::Conv_239 FLOAT16 (64,64,3,3) | 0x0004e300 0x00060300 0x00012000

D RKNN: [10:16:04.857] 15 ConvAddRelu onnx::Conv_240 FLOAT (64) | 0x00060300 0x00060400 0x00000100

D RKNN: [10:16:04.857] 16 ConvRelu onnx::Conv_242 FLOAT16 (128,64,3,3) | 0x00060400 0x00084400 0x00024000

D RKNN: [10:16:04.857] 16 ConvRelu onnx::Conv_243 FLOAT (128) | 0x00084400 0x00084600 0x00000200

D RKNN: [10:16:04.857] 17 Conv onnx::Conv_245 FLOAT16 (128,128,3,3) | 0x00084600 0x000cc600 0x00048000

D RKNN: [10:16:04.857] 17 Conv onnx::Conv_246 FLOAT (128) | 0x000cc600 0x000cc800 0x00000200

D RKNN: [10:16:04.857] 18 ConvAddRelu onnx::Conv_248 FLOAT16 (128,64,3,3) | 0x000cc800 0x000f0800 0x00024000

D RKNN: [10:16:04.857] 18 ConvAddRelu onnx::Conv_249 FLOAT (128) | 0x000f0800 0x000f0a00 0x00000200

D RKNN: [10:16:04.857] 19 ConvRelu onnx::Conv_251 FLOAT16 (128,128,3,3) | 0x000f0a00 0x00138a00 0x00048000

D RKNN: [10:16:04.857] 19 ConvRelu onnx::Conv_252 FLOAT (128) | 0x00138a00 0x00138c00 0x00000200

D RKNN: [10:16:04.857] 20 ConvAddRelu onnx::Conv_254 FLOAT16 (128,128,3,3) | 0x00138c00 0x00180c00 0x00048000

D RKNN: [10:16:04.857] 20 ConvAddRelu onnx::Conv_255 FLOAT (128) | 0x00180c00 0x00180e00 0x00000200

D RKNN: [10:16:04.857] 21 Conv /avg_pool/GlobalAveragePool_2conv0_i1 FLOAT16 (1,128,4,4) | 0x00180e00 0x00181e00 0x00001000

D RKNN: [10:16:04.857] 21 Conv /avg_pool/GlobalAveragePool_2conv0_i2 FLOAT (128) | 0x00181e00 0x00182000 0x00000200

D RKNN: [10:16:04.857] 22 Conv fc.weight FLOAT16 (10,128,1,1) | 0x00000000 0x00000a00 0x00000a00

D RKNN: [10:16:04.857] 22 Conv fc.bias FLOAT (10) | 0x00000a00 0x00000a40 0x00000040

D RKNN: [10:16:04.857] 23 Reshape output_mm_tp_rs_i1 INT64 (2) | 0x00182000 0x00182010 0x00000010

D RKNN: [10:16:04.857] ------------------------------------------------------------------------------+---------------------------------

D RKNN: [10:16:04.858] ----------------------------------------

D RKNN: [10:16:04.858] Total Internal Memory Size: 102KB

D RKNN: [10:16:04.858] Total Weight Memory Size: 1546.06KB

D RKNN: [10:16:04.858] ----------------------------------------

D RKNN: [10:16:04.858] <<<<<<<< end: rknn::RKNNMemStatisticsPass

I rknn building done.

done

--> Export rknn model

done

--> Init runtime environment

I Target is None, use simulator!

done

--> Running model

W inference: The 'data_format' is not set, and its default value is 'nhwc'!

I GraphPreparing : 100%|██████████████████████████████████████████| 48/48 [00:00<00:00, 2080.57it/s]

I SessionPreparing : 100%|████████████████████████████████████████| 48/48 [00:00<00:00, 1241.42it/s]

W inference: The dims of input(ndarray) shape (32, 32, 3) is wrong, expect dims is 4! Try expand dims to (1, 32, 32, 3)!

[array([[ 1.6689453 , -4.8632812 , 2.4257812 , -1.5917969 , 2.5898438 ,

-1.71875 , -0.48266602, -2.0429688 , 1.4287109 , -3.7539062 ]],

dtype=float32)]

done

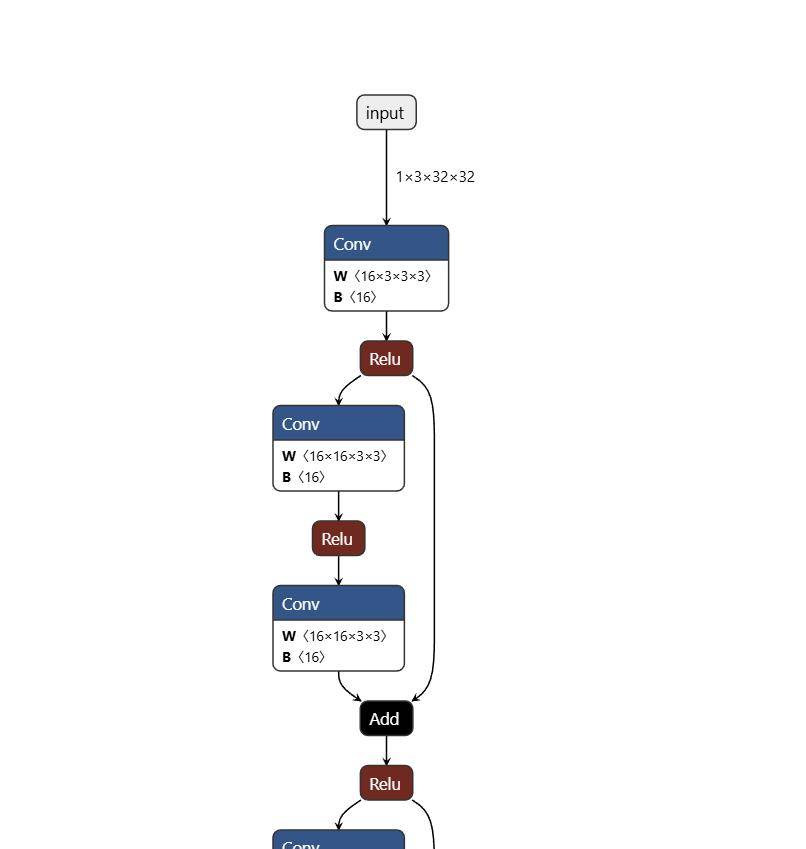

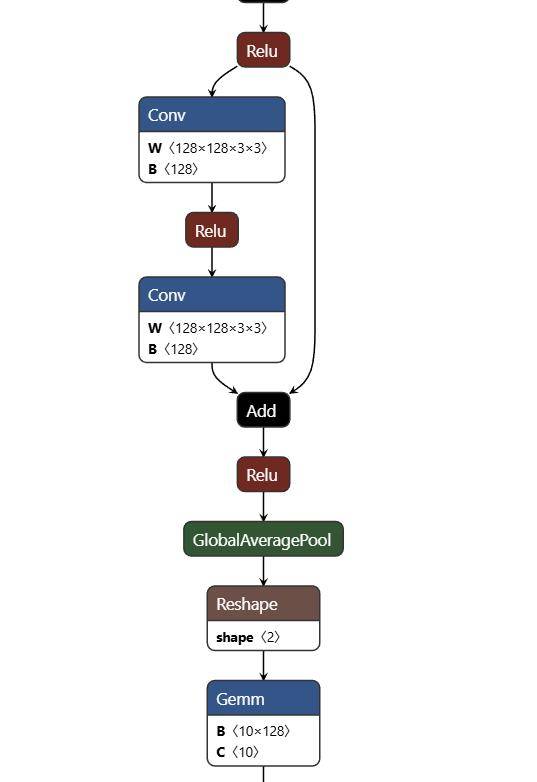

You can open the model file in Netron to inspect the model structure visually. Pay particular attention to the input and the output.

III. Inference on the RK3588 with RKNNLite

import numpy as np
import cv2
import os
from rknnlite.api import RKNNLite

IMG_PATH = '2_67.jpg'
RKNN_MODEL = './resnet18.rknn'
img_height = 32
img_width = 32
class_names = ["plane", "car", "bird", "cat", "deer", "dog", "frog", "horse", "ship", "truck"]

# Create RKNN object
rknn_lite = RKNNLite()

# Load the RKNN model
print('--> Load RKNN model')
ret = rknn_lite.load_rknn(RKNN_MODEL)
if ret != 0:
    print('Load RKNN model failed')
    exit(ret)
print('done')

# Init runtime environment
print('--> Init runtime environment')
ret = rknn_lite.init_runtime()
if ret != 0:
    print('Init runtime environment failed!')
    exit(ret)
print('done')

# Load the image: resize to 32x32, convert BGR to RGB, add the batch dimension
img = cv2.imread(IMG_PATH)
img = cv2.resize(img, (img_width, img_height))
img = cv2.cvtColor(img, cv2.COLOR_BGR2RGB)
img = np.expand_dims(img, 0)

# Run the model
print('--> Running model')
outputs = rknn_lite.inference(inputs=[img])
print("result: ", outputs)
print(
    "This image most likely belongs to {}."
    .format(class_names[np.argmax(outputs)])
)

rknn_lite.release()

The test image is 2_67.jpg, and the predicted class is bird.
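The values in `outputs` appear to be unnormalized scores rather than probabilities, since several of them are negative. As a minimal sketch, assuming they are logits from the final fully connected layer, a softmax turns them into probabilities. The scores below are copied from the board run logged in this section. The `softmax` helper is written here for illustration and is not part of rknnlite.

```python
import math

CLASS_NAMES = ["plane", "car", "bird", "cat", "deer",
               "dog", "frog", "horse", "ship", "truck"]


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)                      # subtract the max to avoid overflow
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Scores printed by the RK3588 run in this article
logits = [0.96533203, -5.40625, 2.234375, -0.48876953, 1.7158203,
          -1.5136719, -1.0996094, -1.6660156, 0.83447266, -3.6992188]

probs = softmax(logits)
best = max(range(len(probs)), key=probs.__getitem__)
print(f"{CLASS_NAMES[best]}: {probs[best]:.3f}")
```

The predicted class does not change, because softmax preserves the ordering of the scores. It only makes the confidence easier to read.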

W rknn-toolkit-lite2 version: 2.3.2

--> Load RKNN model

done

--> Init runtime environment

I RKNN: [21:46:37.504] RKNN Runtime Information, librknnrt version: 2.3.2 (429f97ae6b@2025-04-09T09:09:27)

I RKNN: [21:46:37.504] RKNN Driver Information, version: 0.9.8

I RKNN: [21:46:37.504] RKNN Model Information, version: 6, toolkit version: 2.3.2(compiler version: 2.3.2 (@2025-04-03T08:26:16)), target: RKNPU v2, target platform: rk3588, framework name: ONNX, framework layout: NCHW, model inference type: static_shape

W RKNN: [21:46:37.508] query RKNN_QUERY_INPUT_DYNAMIC_RANGE error, rknn model is static shape type, please export rknn with dynamic_shapes

W Query dynamic range failed. Ret code: RKNN_ERR_MODEL_INVALID. (If it is a static shape RKNN model, please ignore the above warning message.)

done

--> Running model

result: [array([[ 0.96533203, -5.40625 , 2.234375 , -0.48876953, 1.7158203 , -1.5136719 , -1.0996094 , -1.6660156 , 0.83447266, -3.6992188 ]], dtype=float32)]

This image most likely belongs to bird.

4 Inference with the RKNPU2 C API

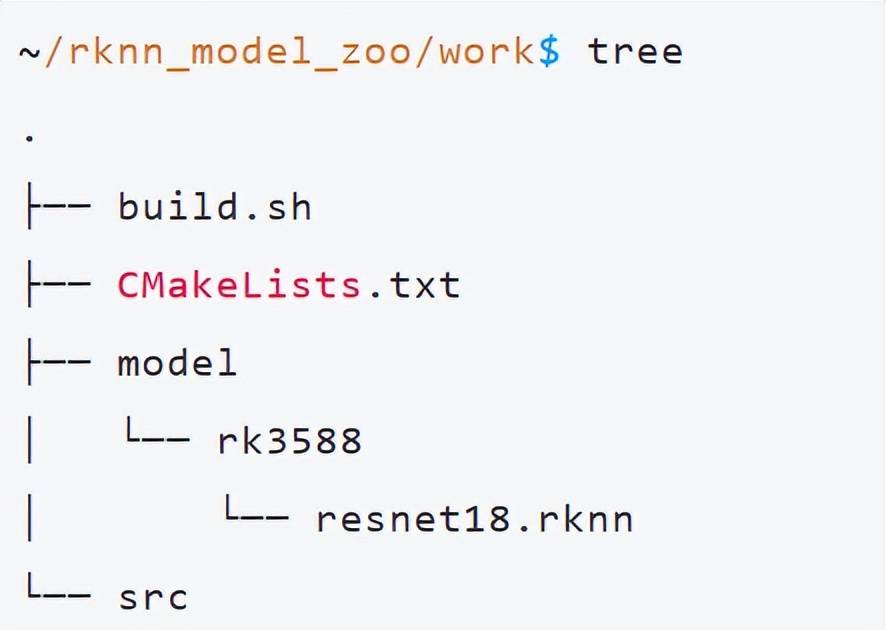

Starting from Rockchip's rknn_model_zoo, create a work directory.

The build.sh build script is as follows:

#!/bin/bash

set -e

ROOT_PWD=$( cd "$( dirname $0 )" && cd -P "$( dirname "$SOURCE" )" && pwd )

# for aarch64

GCC_COMPILER=~/gcc-linaro-7.5.0-2019.12-x86_64_aarch64-linux-gnu/bin/aarch64-linux-gnu

# build

BUILD_DIR=${ROOT_PWD}/build/build_linux_aarch64

if [[ ! -d "${BUILD_DIR}" ]]; then

    mkdir -p ${BUILD_DIR}

fi

cd ${BUILD_DIR}

cmake ../../ \
    -DCMAKE_C_COMPILER=${GCC_COMPILER}-gcc \
    -DCMAKE_CXX_COMPILER=${GCC_COMPILER}-g++

make -j4

make install

cd -

CMakeLists.txt

# Minimum required CMake version is 3.4.1

cmake_minimum_required(VERSION 3.4.1)

# Set the project name to resnet18

project(resnet18)

# Add no extra C compiler flags; compile C++ with the C++11 standard

set(CMAKE_C_FLAGS "${CMAKE_C_FLAGS}")

set(CMAKE_CXX_FLAGS "${CMAKE_CXX_FLAGS} -std=c++11")

# rknn api

set(RKNN_API_PATH ${CMAKE_SOURCE_DIR}/../3rdparty/rknpu2) # Path to the RKNN API library

set(LIB_ARCH aarch64)

set(RKNN_RT_LIB ${RKNN_API_PATH}/Linux/${LIB_ARCH}/librknnrt.so) # Path to the RKNN runtime library

include_directories(${RKNN_API_PATH}/include) # Add the RKNN API headers

# opencv

set(OpenCV_DIR ${CMAKE_SOURCE_DIR}/../3rdparty/opencv/opencv-linux-aarch64/share/OpenCV) # Location of the OpenCV package

find_package(OpenCV REQUIRED) # Locate OpenCV and store its settings in CMake variables such as OpenCV_LIBS

# Path searched for shared libraries at run time

set(CMAKE_INSTALL_RPATH "lib")

# Create the executable

add_executable(${PROJECT_NAME}

src/main.cc

)

# Libraries the project links against

target_link_libraries(${PROJECT_NAME}

${RKNN_RT_LIB}

${OpenCV_LIBS}

)

# Installation directory

set(CMAKE_INSTALL_PREFIX ${CMAKE_SOURCE_DIR}/install/${PROJECT_NAME}_${CMAKE_SYSTEM_NAME})

# Copy the executable, the libraries it needs, and the model files used for testing later

install(TARGETS ${PROJECT_NAME} DESTINATION ./)

install(DIRECTORY model DESTINATION ./)

install(PROGRAMS ${RKNN_RT_LIB} DESTINATION lib)

main.cc

#include <stdio.h>

#include "rknn_api.h"

#include "opencv2/core/core.hpp"

#include "opencv2/imgcodecs.hpp"

#include "opencv2/imgproc.hpp"

#include <string.h>

using namespace cv;

static int rknn_GetTop(float* pfProb, float* pfMaxProb, uint32_t* pMaxClass, uint32_t outputCount, uint32_t topNum)

{

uint32_t i, j;

#define MAX_TOP_NUM 20

if (topNum > MAX_TOP_NUM)

return 0;

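// Maxima start at 0, so only scores greater than 0 are selected; unfilled class slots stay 0xffffffff.

memset(pfMaxProb, 0, sizeof(float) * topNum);

memset(pMaxClass, 0xff, sizeof(uint32_t) * topNum);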

for (j = 0; j < topNum; j++) {

for (i = 0; i < outputCount; i++) {

if ((i == *(pMaxClass + 0)) || (i == *(pMaxClass + 1)) || (i == *(pMaxClass + 2)) || (i == *(pMaxClass + 3)) || (i == *(pMaxClass + 4))) {

continue;

}

if (pfProb[i] > *(pfMaxProb + j)) {

*(pfMaxProb + j) = pfProb[i];

*(pMaxClass + j) = i;

}

}

}

return 1;

}

int main(int argc, char *argv[])

{

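/* The first argument is the RKNN model path; the second is the image to run inference on */

if (argc < 3) {

printf("Usage: %s <rknn model> <image>\n", argv[0]);

return -1;

}

char *model_path = argv[1];

char *image_path = argv[2];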

/* Call rknn_init to set up the RKNN runtime environment and store it in the context variable */

rknn_context context;

rknn_init(&context, model_path, 0, 0, NULL);

/* Read the input image with OpenCV */

cv::Mat img = cv::imread(image_path);

cv::cvtColor(img, img, cv::COLOR_BGR2RGB);

/* Call rknn_query to get the number of input and output tensors */

rknn_input_output_num io_num;

rknn_query(context, RKNN_QUERY_IN_OUT_NUM, &io_num, sizeof(io_num));

printf("model input num: %d, output num: %d\n", io_num.n_input, io_num.n_output);

/* Call rknn_inputs_set to set the input data */

rknn_input input[1];

memset(input, 0, sizeof(rknn_input));

input[0].index = 0;

input[0].buf = img.data;

input[0].size = img.rows * img.cols * img.channels() * sizeof(uint8_t);

printf("rows %d",img.rows);

printf("cols %d",img.cols);

input[0].pass_through = 0;

input[0].type = RKNN_TENSOR_UINT8;

input[0].fmt = RKNN_TENSOR_NHWC;

rknn_inputs_set(context, 1, input);

/* Call rknn_run to run inference */

rknn_run(context, NULL);

/* Call rknn_outputs_get to fetch the inference results */

rknn_output output[1];

memset(output, 0, sizeof(rknn_output));

output[0].index = 0;

output[0].is_prealloc = 0;

output[0].want_float = 1;

rknn_outputs_get(context, 1, output, NULL);

// Post Process

for (int i = 0; i < io_num.n_output; i++)

{

uint32_t MaxClass[5];

float fMaxProb[5];

float* buffer = (float*)output[i].buf;

uint32_t sz = output[i].size / 4;

for(int i=0; i<10;i++)

{

printf("result %f", buffer[i]);

}

rknn_GetTop(buffer, fMaxProb, MaxClass, sz, 5);

printf(" --- Top5 ---\n");

for (int i = 0; i < 5; i++) {

printf("%3d: %8.6f\n", MaxClass[i], fMaxProb[i]);

}

}

/* Call rknn_outputs_release to free the resources held by the inference outputs */

rknn_outputs_release(context, 1, output);

/* Call rknn_destroy to destroy the context */

rknn_destroy(context);

return 0;

}
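main.cc passes raw uint8 RGB pixels to the model (`RKNN_TENSOR_UINT8`) and does not resize the image, so the input file must already be 32x32. Training, however, used `ToTensor` followed by `Normalize([0.5,0.5,0.5], [0.5,0.5,0.5])`. The sketch below checks that this is equivalent to `(pixel - 127.5) / 127.5` on 0..255 values. It is an assumption, not something this section confirms, that the RKNN model was converted with mean and std values of 127.5 so that the normalization happens inside the model. The function names are hypothetical.

```python
def torch_style(pixel):
    """ToTensor (divide by 255) followed by Normalize(mean=0.5, std=0.5)."""
    return (pixel / 255.0 - 0.5) / 0.5


def uint8_style(pixel, mean=127.5, std=127.5):
    """The same normalization applied directly to a 0..255 pixel value.

    mean/std of 127.5 are assumed here; check them against the values
    actually used when the model was converted.
    """
    return (pixel - mean) / std


# Compare the two formulas over every possible uint8 value
max_diff = max(abs(torch_style(p) - uint8_style(p)) for p in range(256))
print(f"max difference over 0..255: {max_diff:.2e}")
```

If the conversion used different mean or std values, the board would see inputs on a different scale than the network was trained on, and accuracy would likely drop.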

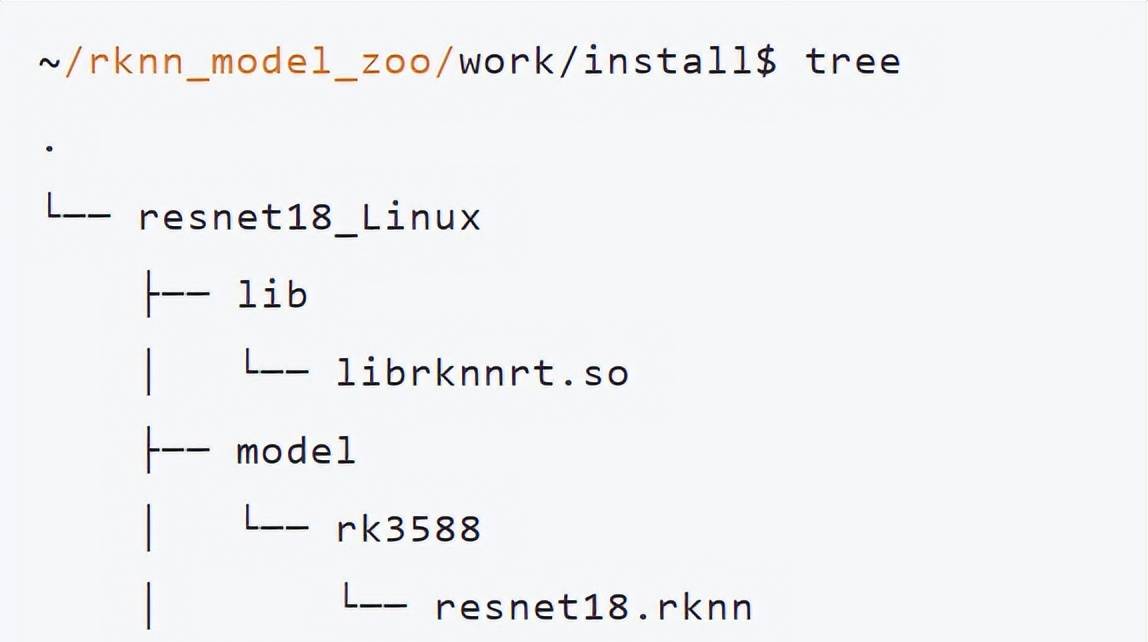

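Run build.sh to compile. The output is placed in the install directory under the current directory.

Copy everything in the resnet18_Linux directory to the userdata directory on the RK3588.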

cd /userdata/resnet18_Linux

export LD_LIBRARY_PATH=./lib

./resnet18 model/rk3588/resnet18.rknn 2_67.jpg

The output is shown below; the prediction is also correct.

/userdata/resnet18_Linux$ ./resnet18 model/rk3588/resnet18.rknn 2_67.jpg

model input num: 1, output num: 1

--- Top5 ---

2: 2.234375

4: 1.715820

0: 0.965332

8: 0.834473

-1: 0.000000
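The last Top5 row, `-1: 0.000000`, seems to follow from how `rknn_GetTop` in main.cc is initialized. The maxima start at 0 and the class slots at 0xffffffff, so a score is selected only if it is greater than 0, and only four of the ten scores are positive. As a minimal sketch of a top-k that also handles negative scores, the scores can be sorted directly. The `top_k` helper is hypothetical, and the scores are the ones printed by the rknnlite run earlier in this article.

```python
def top_k(scores, k):
    """Return (class_index, score) pairs for the k largest scores, highest first."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return [(i, scores[i]) for i in order[:k]]


# Scores printed by the RK3588 run in this article
scores = [0.96533203, -5.40625, 2.234375, -0.48876953, 1.7158203,
          -1.5136719, -1.0996094, -1.6660156, 0.83447266, -3.6992188]

print(" --- Top5 ---")
for idx, score in top_k(scores, 5):
    print(f"{idx:3d}: {score:8.6f}")
```

With this ordering the fifth entry is class 3 (cat) with a negative score, instead of an empty slot.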

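This covers the complete ResNet18 workflow, from building and training the network to deploying it on the RK3588. In practice, training needs many more epochs to achieve better results.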

